Why Test Coverage Is the Wrong Metric

Test coverage tells you how much of your code is touched by tests. It says nothing about whether your product does what it's supposed to do. You can have 90% coverage and still ship broken requirements, because coverage measures code paths, not user-facing behavior. The metric that actually matters is requirements-verified%: the percentage of stated product requirements that have a continuously running check that passes.

Key Takeaways

Coverage% measures code, not requirements. A test that touches a line of code counts toward coverage. Whether that test verifies a user-facing requirement is irrelevant to the metric.

You can have 100% coverage with broken requirements. If a requirement never became code, tests can't cover it. Coverage is blind to missing implementation.

Requirements-verified% is the honest metric. Count your stated requirements. Count the ones with running checks that pass. That ratio tells you what you actually know about your product.

The shift is from "did we test the code we wrote" to "did we verify the product we promised." These are different questions. One is answered by a coverage report. The other requires a requirements harness.

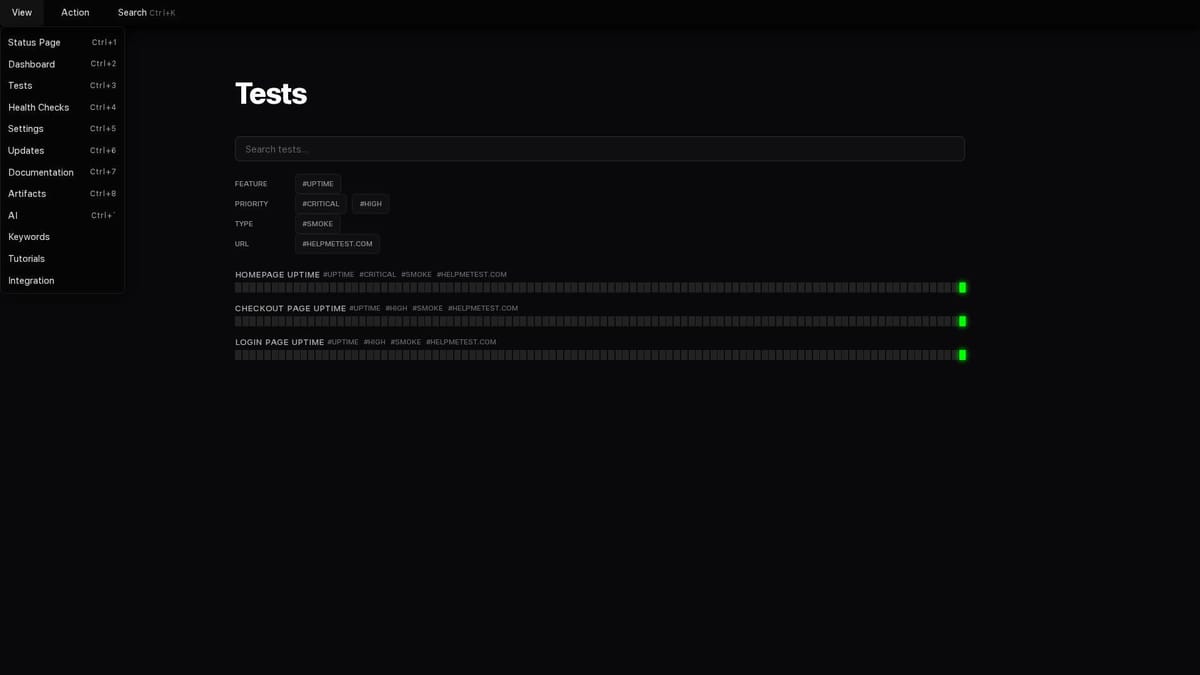

HelpMeTest makes requirements-verified% measurable. Every Artifact requirement maps to a running check. Pass rate on requirements checks is your real QA score.

The Metric Everyone Uses and Why It Fails

Test coverage is the default measure of software quality. Engineering teams set coverage gates. PRs get blocked if coverage drops. Management dashboards show coverage percentages. The implicit promise: higher coverage = safer software.

The problem is simple and fundamental: test coverage measures code, not requirements.

When a test runs a line of code, that line counts as "covered." The test could be checking anything — or nothing meaningful. The line is covered whether the test verifies important behavior or just exercises a code path to hit the metric.

Here's a concrete example. Say you have this code:

```javascript
function addToCart(item) {
  if (item.inStock) {
    cart.items.push(item)
    localStorage.setItem('cart', JSON.stringify(cart))
    updateHeaderCount()
  }
}
```

And this test:

```javascript
test('addToCart runs without error', () => {
  const item = { id: 1, name: 'Widget', inStock: true }
  expect(() => addToCart(item)).not.toThrow()
})
```

This test touches every line of the addToCart function. Coverage: 100% for this file. The test:

- Does not verify the item is actually in the cart after calling

- Does not verify localStorage was updated

- Does not verify the header count changed

- Does not test the `inStock: false` path

- Does not verify cart persistence across page loads

Four requirements potentially broken. Coverage: 100%.
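For contrast, here is a minimal sketch of checks that verify the behaviors listed above instead of only exercising the lines. It assumes the cart, the storage, and the header-count updater are passed in explicitly so the example runs outside a browser. `createMemoryStorage`, `makeAddToCart`, and `runCartChecks` are hypothetical names, not part of the original code, and a page load is approximated by re-reading the cart from storage.

```javascript
// Hypothetical in-memory stand-in for localStorage so the sketch runs in Node.
function createMemoryStorage() {
  const data = new Map()
  return {
    setItem: (key, value) => data.set(key, String(value)),
    getItem: (key) => (data.has(key) ? data.get(key) : null),
  }
}

// Same logic as addToCart above, with its dependencies injected (an assumption).
function makeAddToCart({ cart, storage, updateHeaderCount }) {
  return function addToCart(item) {
    if (item.inStock) {
      cart.items.push(item)
      storage.setItem('cart', JSON.stringify(cart))
      updateHeaderCount()
    }
  }
}

// Each result maps to one behavior the original test left unverified.
function runCartChecks() {
  const cart = { items: [] }
  const storage = createMemoryStorage()
  let headerCount = 0
  const addToCart = makeAddToCart({
    cart,
    storage,
    updateHeaderCount: () => { headerCount = cart.items.length },
  })

  const results = {}
  addToCart({ id: 1, name: 'Widget', inStock: true })
  results.itemInCart = cart.items.some((i) => i.id === 1)
  results.storageUpdated = JSON.parse(storage.getItem('cart')).items.length === 1
  results.headerCountUpdated = headerCount === 1

  // Out-of-stock items must not be added.
  addToCart({ id: 2, name: 'Gadget', inStock: false })
  results.outOfStockRejected = cart.items.length === 1

  // Approximate a page load: rebuild the cart from storage only.
  const restored = JSON.parse(storage.getItem('cart'))
  results.persistsAcrossLoad =
    restored.items.length === 1 && restored.items[0].id === 1

  return results
}

console.log(runCartChecks())
```

If any of these behaviors regresses, the matching result flips to `false` even though line coverage stays the same.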

What Coverage Actually Measures

Coverage answers one question: "which lines of code did the test suite execute?"

It does not answer:

- Do users get what we promised them?

- Are all stated requirements working?

- Is behavior correct or just present?

- Are requirements that were never coded being checked?

That last point is the fatal flaw for teams using AI coding agents. When an AI agent partially implements a feature — implements 3 of 5 requirements, misses 2 — test coverage gives you no signal. Coverage only measures the code that exists. Missing code has no coverage because it has no lines to measure.

Your requirements doc says 5 things should work. Your code implements 3. Your tests cover 100% of the code. Your requirements-verified%: 60%.

Coverage tells you 100%. Requirements-verified% tells you 60%. One of these is useful.

The Requirements Coverage Gap

Let's look at what happens across a typical AI-assisted feature development cycle.

You write a PRD with 8 requirements for a user authentication flow:

1. Users can register with email and password

2. Passwords must be at least 8 characters

3. Email must be unique: registration fails if email already exists

4. Users can log in with email and password

5. Login fails after 5 incorrect attempts (account lockout)

6. Users can reset password via email link

7. Password reset links expire after 24 hours

8. Session persists across browser restarts

You give the AI coding agent a summary of the feature. It implements requirements 1, 2, 4, and 6. Your developer writes tests for what they see implemented. CI passes.

Requirements-verified status:

| Requirement | Implemented | Has running check |

|---|---|---|

| Register with email/password | Yes | Yes |

| Password minimum length | Yes | Yes |

| Unique email validation | No | No |

| Login with email/password | Yes | Yes |

| Account lockout after 5 attempts | No | No |

| Password reset via email | Yes | Yes |

| Reset links expire after 24h | No | No |

| Session persistence | No | No |

Code coverage: high (everything implemented is tested). Requirements-verified%: 50%.

Your CI pipeline says everything is fine. Half your auth requirements are broken.
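The same gap can be reproduced with a few lines of arithmetic. The sketch below treats the table as data and models code-derived testing as "a test exists only where code exists." The field and function names are illustrative, and `implementedTestedPercent` is a rough stand-in for what a coverage report sees, not a real coverage calculation.

```javascript
// The eight auth requirements from the table above, as data.
const authRequirements = [
  { name: 'Register with email/password', implemented: true },
  { name: 'Password minimum length', implemented: true },
  { name: 'Unique email validation', implemented: false },
  { name: 'Login with email/password', implemented: true },
  { name: 'Account lockout after 5 attempts', implemented: false },
  { name: 'Password reset via email', implemented: true },
  { name: 'Reset links expire after 24h', implemented: false },
  { name: 'Session persistence', implemented: false },
]

// Code-derived testing: tests are written only for what was implemented.
function deriveTestsFromCode(requirements) {
  return requirements.filter((r) => r.implemented).map((r) => r.name)
}

function summarize(requirements) {
  const tested = deriveTestsFromCode(requirements)
  const implementedCount = requirements.filter((r) => r.implemented).length
  return {
    // Share of implemented features with a test (the coverage-style view).
    implementedTestedPercent: (tested.length / implementedCount) * 100,
    // Share of stated requirements with a check (the PRD view).
    requirementsVerifiedPercent: (tested.length / requirements.length) * 100,
    unverified: requirements
      .filter((r) => !tested.includes(r.name))
      .map((r) => r.name),
  }
}

console.log(summarize(authRequirements))
```

The first number comes out at 100 and the second at 50, and the `unverified` list names the four requirements nobody is checking.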

The Shift: From Code Coverage to Requirements Verification

The reframe is straightforward but requires changing how you think about testing's purpose.

Old model: Write code → write tests for the code → measure coverage of the code.

New model: State requirements → derive checks from requirements → measure requirements verified.

The shift is in where tests come from. Code-derived tests are inherently blind to missing implementation. Requirements-derived checks are not — every requirement has a check, whether or not the implementation exists.

This is the core idea behind requirements as tests: your PRD or requirements document becomes the source of truth for what gets checked, not the code you happened to write.

The resulting metric is honest:

```
Requirements-verified% =
  (requirements with passing running checks) / (total stated requirements)
```

If you have 20 requirements and 15 have passing checks, your requirements-verified% is 75%. You know exactly which 5 are unverified. You can fix that gap directly, by implementing the missing behavior or by understanding why those requirements changed.

Compare to a coverage% of 85%: you know 85% of code lines are touched by tests, but you have no idea how many requirements that represents or which ones might be broken.
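As a rough illustration, the ratio can be computed from a list of requirement statuses. This is a sketch under assumptions: the `status` values (`'pass'`, `'fail'`, `'missing'`), the `REQ-n` ids, and the split between failing and missing checks are all made up for the example. Only the 20 and 15 come from the text.

```javascript
// status is 'pass', 'fail', or 'missing' (no check exists yet): an assumed convention.
function requirementsVerified(requirements) {
  if (requirements.length === 0) {
    return { percent: 0, unverified: [] }
  }
  const passing = requirements.filter((r) => r.status === 'pass')
  const unverified = requirements
    .filter((r) => r.status !== 'pass')
    .map((r) => r.id)
  return {
    percent: (passing.length / requirements.length) * 100,
    unverified,
  }
}

// 20 requirements, 15 with passing checks; the other 5 are failing or unchecked.
const example = Array.from({ length: 20 }, (_, i) => ({
  id: `REQ-${i + 1}`,
  status: i < 15 ? 'pass' : i < 18 ? 'fail' : 'missing',
}))

const report = requirementsVerified(example)
console.log(`${report.percent}% verified; unverified: ${report.unverified.join(', ')}`)
```

A requirement with no check counts against the metric exactly like a failing one, which is what keeps the number honest.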

Why This Matters More With AI Coding Agents

Test coverage was always an imperfect proxy. In the era of AI coding agents, the gap between coverage% and actual requirement satisfaction has grown.

Pre-AI, developers sat with a feature long enough to have it in their heads. When writing tests, they had context. They'd catch most of what they'd implemented. Coverage% was still a bad metric, but it was a less wrong one.

With AI coding agents, the implementation gap widens:

- The agent works from whatever context you provided in the session

- Context you didn't provide → requirements the agent never knew about

- Requirements the agent never knew about → requirements never implemented

- Requirements never implemented → code coverage says nothing about them

The failure pattern described in "What Happens When Your AI Coding Agent Skips a Requirement" happens precisely because coverage% gives no signal when implementation is missing. Requirements-verified% gives immediate signal: if the requirement has a check, the check fails when implementation is absent.

This is why requirements-derived test harnesses are the correct workflow for AI-assisted development — not as a nice-to-have, but as the only mechanism that catches the specific failure mode AI agents introduce.
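To make the "check fails when implementation is absent" point concrete, here is a small sketch. Both auth services are hypothetical in-memory stand-ins written for this example. The check itself is derived only from the requirement "Login fails after 5 incorrect attempts" and knows nothing about how lockout is coded.

```javascript
// Hypothetical auth service that implements login but skips lockout.
function createAuthWithoutLockout(users) {
  return {
    login(email, password) {
      return users[email] === password
        ? { ok: true }
        : { ok: false, reason: 'invalid' }
    },
  }
}

// The same service with the lockout requirement implemented.
function createAuthWithLockout(users, maxAttempts = 5) {
  const failures = {}
  return {
    login(email, password) {
      if ((failures[email] || 0) >= maxAttempts) {
        return { ok: false, reason: 'locked' }
      }
      if (users[email] === password) {
        failures[email] = 0
        return { ok: true }
      }
      failures[email] = (failures[email] || 0) + 1
      return { ok: false, reason: 'invalid' }
    },
  }
}

// Requirement-derived check, written from the PRD rather than from the code.
function checkAccountLockout(auth) {
  for (let i = 0; i < 5; i++) {
    auth.login('a@example.com', 'wrong-password')
  }
  // After 5 failures, even the correct password must be rejected.
  return auth.login('a@example.com', 'correct-password').ok === false
}

const users = { 'a@example.com': 'correct-password' }
console.log(checkAccountLockout(createAuthWithoutLockout(users))) // false: requirement unmet
console.log(checkAccountLockout(createAuthWithLockout(users))) // true: requirement met
```

A code-derived test suite for the first service would have nothing to say about lockout, because there is no lockout code to test.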

What Requirements-Verified% Looks Like in Practice

Here's how to actually measure it with HelpMeTest:

1. List your requirements explicitly

Requirements can't be measured if they're vague. For each feature, enumerate the concrete behaviors users are supposed to experience. The PRD to Test Harness guide walks through this step-by-step.

2. Map each requirement to a check

Every behavioral requirement gets a test. Every operational requirement gets a health check. The mapping is explicit — the test name reflects the requirement it verifies.
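One way to keep that mapping explicit is a plain lookup from requirement id to named check. The sketch below is generic JavaScript, not HelpMeTest's format. The ids, requirement wording, and stubbed check bodies are all hypothetical.

```javascript
// Hypothetical requirement list; ids and wording are illustrative.
const mappedRequirements = [
  { id: 'AUTH-1', text: 'Users can register with email and password', kind: 'behavioral' },
  { id: 'AUTH-5', text: 'Login fails after 5 incorrect attempts', kind: 'behavioral' },
  { id: 'OPS-1', text: 'Login endpoint responds', kind: 'operational' },
]

// Explicit mapping: requirement id -> check whose name states the requirement.
// The run functions are stubs; real checks would exercise the product.
const checkMap = {
  'AUTH-1': { name: 'AUTH-1: Users can register with email and password', run: () => true },
  'OPS-1': { name: 'OPS-1: Login endpoint responds', run: () => true },
}

// Requirements with no mapped check are the gaps to close first.
function findUnmapped(requirements, checks) {
  return requirements.filter((r) => !(r.id in checks)).map((r) => r.id)
}

console.log(findUnmapped(mappedRequirements, checkMap)) // logs the one unmapped id, AUTH-5
```

Because each check name carries the requirement it verifies, a failing check reads as a broken requirement, not as a broken function.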

3. Run checks against production

Requirements-verified% is only meaningful if the checks run against the real environment. CI checks against a snapshot. Production behavior can diverge. HelpMeTest runs checks every 5 minutes against your live environment.

4. Your dashboard shows reality

In HelpMeTest, your test pass rate represents requirements currently working in production. A test failure means a requirement is broken — right now, in the environment users are using.

```
Tests: 18/20 passing
✅ User registration works
✅ Password validation enforced
✅ Email uniqueness checked
✅ Login succeeds with valid credentials
✅ Login fails with invalid credentials
✅ Password reset email sends
❌ Account lockout after 5 attempts ← broken requirement
✅ Session persists across restart
...
```

Requirements-verified% today: 90%. Two checks are failing, one of them shown above, and you know exactly which requirements they map to.

This is information you can act on. A coverage report at 85% can't tell you which requirement is broken.

The Coverage Metric Isn't Useless — It's Just Insufficient

This isn't an argument to eliminate code coverage. Unit tests and coverage metrics have real value for catching regressions in business logic, verifying edge cases in pure functions, and providing fast feedback during development.

The problem is using coverage% as a proxy for product quality. It isn't one.

Coverage% answers: "how much of the code we wrote is tested?" Requirements-verified% answers: "how much of the product we promised is working?"

For engineering teams that ship to users, the second question is the one that matters. Coverage% is an internal implementation metric. Requirements-verified% is a product quality metric.

Use both. But if you're going to report one number that tells you whether your product is working, report the one that's actually about whether your product is working.

Getting Started

To shift from coverage-centric to requirements-centric quality measurement:

```shell
# Install HelpMeTest
curl -fsSL https://helpmetest.com/install | bash
helpmetest login

# Connect to your AI coding tool
helpmetest install mcp --claude HELP-your-token
```

Then work through the PRD to Test Harness workflow: list your requirements, generate checks, deploy the harness. Within an afternoon you'll have a requirements-verified% for your core features, and a dashboard that updates every 5 minutes.

Coverage% will still exist. It will still be useful for what it measures. But it will no longer be the number you rely on to know if your product is actually working.