What Happens When Your AI Coding Agent Skips a Requirement

AI coding agents don't fail loudly. They succeed partially. They implement what you described in the session, miss what you forgot to mention, and ship — with tests passing. The result: requirements live in a PRD, code ships without them, users find the gap. The fix is continuous requirement verification that runs independently of whether a developer remembered to test something.

Key Takeaways

Partial implementation looks like complete implementation. Your AI agent won't tell you it missed a requirement. It will implement what it understood and stop. All tests pass. The feature looks done.

Silent failures compound over time. The longer a missing requirement goes unchecked, the more code builds on top of it. What starts as a missed edge case becomes an architectural assumption everyone relies on.

Most AI coding agents carry no memory across sessions by default. What was discussed in a previous session, or written in a requirements doc the agent never saw, does not exist as far as the agent is concerned.

Requirements verification must be independent of the developer. If catching a gap depends on someone remembering to write a test, the gap will get missed. The check must come from the requirement, not from memory.

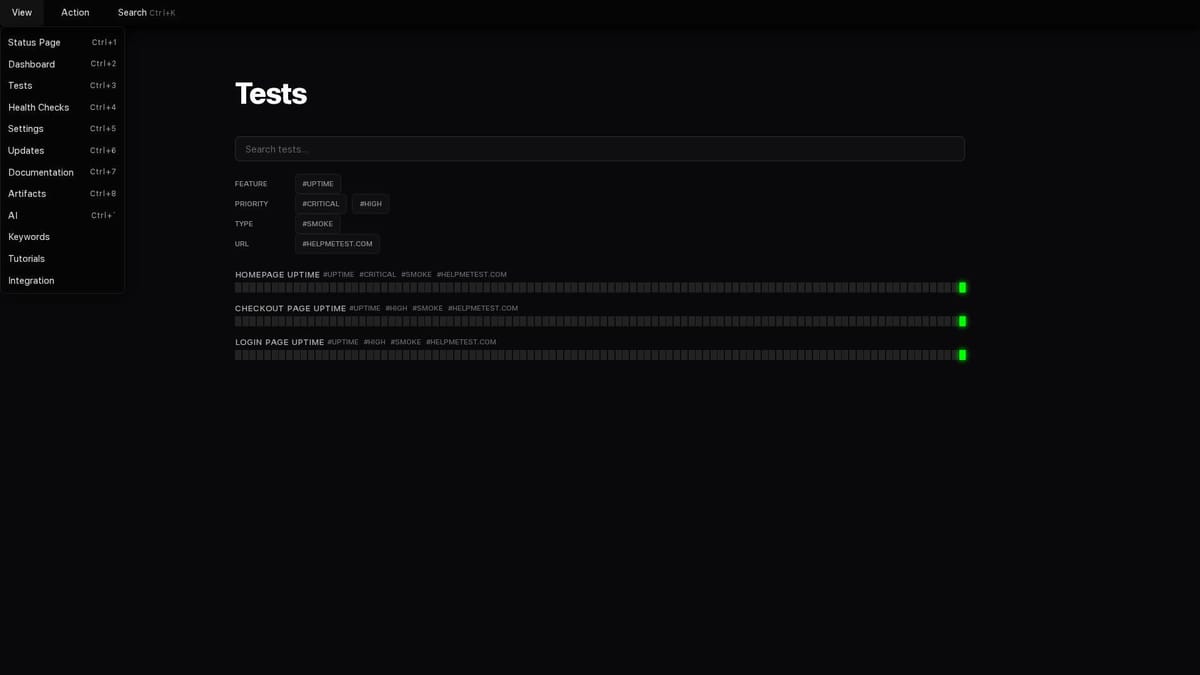

HelpMeTest runs requirement checks continuously. Not once in CI. Every 5 minutes, against production. If a requirement breaks — now or three months from now — you find out before users do.

The Feature Is "Done"

Your AI coding agent just finished a feature. You described the requirements, it wrote the code, ran the tests, committed. Everything is green. You ship.

A week later, a user reports that their shopping cart empties when they refresh the page.

You look at the code. The add-to-cart functionality is correct. The cart rendering is correct. The test for "add item to cart" passes. But cart persistence across page refreshes — a requirement that was clearly in the original spec — was never implemented.

The agent didn't break it. It never built it. And because no test ever checked for it, nothing caught the gap.

This is what silent failure looks like in an AI-assisted development workflow.

Why AI Agents Miss Requirements

To understand why this keeps happening, you need to understand how AI coding agents actually work.

When you tell an AI coding agent to implement a feature, it works from the context in that session. It sees your message, the files you've shared or that it has access to, and any prior conversation in the window. It implements based on what it can see and infer.

What it does not do:

- Cross-reference its implementation against a requirements document it hasn't been shown

- Track which requirements from a multi-page PRD it's covered vs. missed

- Remember requirements from a prior session when you start a new one

- Flag that it's not sure whether it handled edge case X from requirement Y

It implements what it understood from what it received. Nothing more.

This produces a characteristic failure pattern:

Requirements doc (4 items):

1. Users can add items to cart

2. Cart persists across page refreshes

3. Out-of-stock items cannot be added

4. Cart count appears in site header

Session context shown to agent: brief message, one code file

Agent implements: #1 (add to cart), #4 (header count)

Agent never sees: #2 and #3 were in the PRD you forgot to paste

Tests written: covers #1 and #4

Tests covering #2: 0

Tests covering #3: 0

Result: Feature ships, two requirements broken, all tests green

The agent didn't fail. It succeeded at exactly what it was given. The failure was structural: the requirements never made it into the verification loop.
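The pattern above can be made visible with a small traceability check. This is a minimal sketch, not a HelpMeTest feature: the `Requirement` class, the test names, and the `TEST_COVERAGE` mapping are all hypothetical, and it assumes someone has tagged each test with the requirement IDs it verifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    id: int
    text: str


# The four requirements from the example PRD.
REQUIREMENTS = [
    Requirement(1, "Users can add items to cart"),
    Requirement(2, "Cart persists across page refreshes"),
    Requirement(3, "Out-of-stock items cannot be added"),
    Requirement(4, "Cart count appears in site header"),
]

# Hypothetical mapping: test name -> requirement IDs that test verifies.
TEST_COVERAGE = {
    "test_add_item_to_cart": {1},
    "test_header_shows_cart_count": {4},
}


def unverified(requirements, test_coverage):
    """Return the requirements that no test claims to verify."""
    covered = set().union(*test_coverage.values())
    return [r for r in requirements if r.id not in covered]


for req in unverified(REQUIREMENTS, TEST_COVERAGE):
    print(f"UNVERIFIED #{req.id}: {req.text}")
```

The test suite is green, yet the script lists requirements 2 and 3. The gap only shows up when you start from the requirements list instead of the tests.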

The Three Ways Requirements Get Skipped

This isn't one problem — it's three related ones, each with the same result.

1. Requirements that were never shown to the agent

The most common case. Your PRD is 30 pages. You paste a summary into the chat. The agent implements the summary. The items you didn't include in the summary don't get implemented.

You didn't notice because you were focused on the items you asked about. The agent didn't flag the gaps because it doesn't know the full document exists.

2. Requirements that the agent de-scoped silently

You describe a feature with complex edge cases. The agent implements the happy path cleanly and leaves the edge cases unimplemented — or partially implemented — without telling you. The description you gave was ambiguous, the agent made a judgment call, and the judgment was wrong.

No error. No warning. Just missing behavior that looked like complete behavior.

3. Requirements that broke after the fact

The agent implemented everything correctly. Tests passed. Then, two weeks later, a database migration changed something, or a third-party API updated its response format, or configuration drifted in production. A requirement that was working is now broken.

There was never a check that ran against production. CI ran against a snapshot. The real thing failed silently.
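Here is a sketch of how that third case slips past CI, assuming a hypothetical pricing API. The payload shapes, `parse_price`, and `check_price_parses` are invented for illustration. The mocked response is the snapshot the tests were written against; the "live" response stands in for a format change rolled out later.

```python
def parse_price(payload: dict) -> float:
    """Parse the price the way the code was written on day one."""
    return float(payload["price"])


# Snapshot used by the mocked test in CI (hypothetical format).
MOCK_RESPONSE = {"sku": "widget", "price": "9.99"}

# Hypothetical format the provider switched to two weeks after launch.
LIVE_RESPONSE = {"sku": "widget", "price": {"amount": "9.99", "currency": "USD"}}


def check_price_parses(fetch) -> bool:
    """Requirement check: a product page can show a positive price."""
    try:
        return parse_price(fetch()) > 0
    except (KeyError, TypeError, ValueError):
        return False


print("CI (mocked):", check_price_parses(lambda: MOCK_RESPONSE))
print("Production:", check_price_parses(lambda: LIVE_RESPONSE))
```

The same check passes against the mock and fails against the changed response. Nothing in the codebase changed, so no deploy would have triggered a CI run.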

What Tests Catch vs. What They Miss

The frustrating part is that your test suite can be completely green while requirements are broken. This isn't a testing failure — it's a structural limitation of how tests are written.

Tests protect what someone remembered to test. If a requirement never had a test written for it, the test suite has no opinion about whether that requirement works. It simply doesn't check.

Code coverage doesn't help here. You can have 90% code coverage, or even 100%, and still miss a requirement entirely, because coverage only measures code that exists. A requirement that was never implemented has no lines to leave uncovered. Coverage measures lines touched, not behaviors verified.

The gap looks like this:

| What tests see | What tests are blind to |

|---|---|

| The functionality that was built | The functionality that was supposed to be built but wasn't |

| Edge cases the developer thought of while coding | Edge cases that exist in the requirements but not in the developer's memory |

| Behavior that broke in this deploy | Behavior that broke silently in production last week |

| Mocked integrations | Live production integrations |

The fundamental issue: tests are written after the fact, from the perspective of the code that exists. Requirements exist before the code. That gap is where silent failures live.
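The coverage point can be shown in a few lines. This is an illustrative sketch with a hypothetical `Cart` class: the one test executes every line of the implementation, so line coverage is complete, but the out-of-stock requirement was never built.

```python
class Cart:
    """Hypothetical cart as an agent might write it from a partial brief."""

    def __init__(self):
        self.items = []

    def add(self, sku: str, in_stock: bool) -> int:
        # The out-of-stock requirement is missing: in_stock is never checked.
        self.items.append(sku)
        return len(self.items)


def test_add_item():
    """Code-derived test: exercises every line of Cart."""
    cart = Cart()
    assert cart.add("widget", in_stock=True) == 1


def check_out_of_stock_rejected() -> bool:
    """Requirements-derived check: out-of-stock items cannot be added."""
    cart = Cart()
    cart.add("widget", in_stock=False)
    return len(cart.items) == 0


test_add_item()  # passes, with every line of Cart executed
print("out-of-stock requirement holds:", check_out_of_stock_rejected())
```

A coverage report would show nothing wrong here. Only a check written from the requirement text exposes the missing behavior.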

The Fix: Requirement Checks That Run Independently

The solution isn't to write better tests. It's to change where the checks come from.

Instead of deriving tests from code ("let me write tests for what I just built"), derive checks from requirements ("let me verify that everything in the spec is working").

This is the approach described in Requirements as Tests. The key distinction:

- Code-derived tests: what the developer thought to test

- Requirements-derived checks: what the product is supposed to do

For the shopping cart example, a requirements-derived check doesn't come from looking at the implementation. It comes from reading the requirement:

Cart Persists Across Page Refresh

    Go To    https://shop.example.com/product/widget

    Click    button[data-testid="add-to-cart"]

    Wait For Elements State    .cart-count    visible    timeout=5s

    Reload

    Get Text    .cart-count    ==    1

This check exists because the requirement exists. Not because a developer happened to write a test for it. If the agent never implemented persistence, this check fails immediately. If something breaks persistence later, this check fails when it happens.

The check runs whether or not anyone remembers it needs to run.

How HelpMeTest Catches What Agents Miss

HelpMeTest is designed specifically for this pattern. The workflow:

Step 1: Store your requirements

HelpMeTest's Artifacts system stores your product requirements — PRD excerpts, user stories, acceptance criteria. These become the source of truth for what checks get generated.

Step 2: Generate checks from requirements, not code

When you ask HelpMeTest's AI to generate tests for a feature, it works from the stored requirements. Not from the codebase, not from what it can see was implemented — from what the feature is supposed to do.

If the requirement is "out-of-stock items cannot be added," there's a check for that, regardless of whether the implementation exists.

Step 3: Run before implementation starts

The pattern that catches agent failures most reliably: generate the requirements harness before telling the agent to implement. Run it — it will fail, because nothing is built yet.

Then give the agent the harness as its specification: "implement this feature, the tests tell you when you're done."

Now the agent has a concrete, executable definition of done. It can't finish without all requirements being satisfied, because the tests won't pass until they are.
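A rough sketch of that "fail first, then implement" loop, using an in-memory stand-in for the cart. `StubCart`, `PersistentCart`, and the check functions are hypothetical and only model the behavior; a real harness would drive the browser. Each check asserts something positive, so an empty stub cannot pass by accident.

```python
class StubCart:
    """Before implementation: nothing is built, every operation is a no-op."""

    def add(self, sku, in_stock=True):
        pass

    def reload(self):
        pass

    def count(self):
        return 0


class PersistentCart:
    """After implementation: page state is restored from storage on reload."""

    def __init__(self):
        self._storage = []  # stands in for server-side or local storage
        self._page = []     # in-page state, lost on reload

    def add(self, sku, in_stock=True):
        if not in_stock:
            return
        self._page.append(sku)
        self._storage = list(self._page)

    def reload(self):
        self._page = list(self._storage)

    def count(self):
        return len(self._page)


def check_add(make_cart):
    cart = make_cart()
    cart.add("widget")
    return cart.count() == 1


def check_persists(make_cart):
    cart = make_cart()
    cart.add("widget")
    cart.reload()
    return cart.count() == 1


def check_out_of_stock(make_cart):
    cart = make_cart()
    cart.add("widget")
    cart.add("gadget", in_stock=False)
    return cart.count() == 1


CHECKS = {
    "add_to_cart": check_add,
    "persists_across_refresh": check_persists,
    "out_of_stock_rejected": check_out_of_stock,
}


def run_harness(make_cart):
    """Run every requirement check and return name -> passed."""
    return {name: check(make_cart) for name, check in CHECKS.items()}


def is_done(results):
    """The feature is done only when every requirement check passes."""
    return all(results.values())


print("before:", run_harness(StubCart), "done =", is_done(run_harness(StubCart)))
print("after: ", run_harness(PersistentCart), "done =", is_done(run_harness(PersistentCart)))
```

The useful property is that "done" is computed from the requirement checks, not declared by the agent.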

Step 4: Run continuously against production

CI is not enough. Requirements can break outside of deploys — background jobs fail, third-party APIs change, configuration drifts. HelpMeTest runs your requirement checks every 5 minutes against your production environment.

When a requirement fails — whenever it fails, for whatever reason — you get an alert. You know before users do.
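To make the idea concrete, here is a minimal polling loop. It is a sketch of the general technique, not how HelpMeTest is implemented: `monitor`, its parameters, and the check names are hypothetical. It runs each check on an interval and alerts only when a check goes from passing to failing, so a long outage does not produce an alert every cycle.

```python
import time
from typing import Callable, Dict, Optional


def monitor(
    checks: Dict[str, Callable[[], bool]],
    alert: Callable[[str], None],
    interval_seconds: float = 300,  # 5 minutes
    cycles: Optional[int] = None,   # None means run forever
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Run every check each cycle; alert on a pass-to-fail transition."""
    last_ok: Dict[str, bool] = {}
    done = 0
    while cycles is None or done < cycles:
        for name, check in checks.items():
            try:
                ok = bool(check())
            except Exception:
                # A check that crashes counts as a failed requirement.
                ok = False
            # Checks are assumed healthy before their first run, so a
            # failure on the very first cycle also raises an alert.
            if last_ok.get(name, True) and not ok:
                alert(name)
            last_ok[name] = ok
        done += 1
        if cycles is None or done < cycles:
            sleep(interval_seconds)
```

You could call it as `monitor({"cart_persists": my_check}, alert=print)`, where `my_check` is any function that returns `True` while the requirement holds. The `cycles` and `sleep` parameters exist so the loop can be exercised in a test without waiting five minutes.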

What This Looks Like in Practice

Here's the difference in how a feature ships with and without requirement verification:

Without it:

- Write requirements in a doc

- Describe feature to AI agent

- Agent implements (partially)

- Developer writes tests (for what was implemented)

- CI passes

- Ships

- Users discover missing behavior → support ticket

With requirement verification:

- Write requirements in HelpMeTest Artifacts

- Generate requirement checks (before coding)

- Checks fail (nothing is built yet — this is correct)

- Agent implements against the checks

- Checks go green → feature complete

- Checks continue running against production every 5 minutes

- If anything breaks, alert fires before any user sees it

The second workflow has no step where a requirement can silently go unverified. Every requirement that exists has a check. Every check runs continuously.

Getting Started

    # Install HelpMeTest

    curl -fsSL https://helpmetest.com/install | bash

    helpmetest login

    # Connect to your AI coding tool

    helpmetest install mcp --claude HELP-your-token

Once connected, ask your AI agent to read your requirements from HelpMeTest Artifacts and generate a verification harness. Run it before coding starts. Let the failing tests be your specification.

Your AI coding agent is excellent at implementing specified behavior. Give it a complete, executable specification — not just a description — and the gap between requirements and implementation closes.

For the step-by-step workflow for turning a PRD into a test harness, see PRD to Test Harness: A Practical Guide.