Stop Writing Tests. Start Codifying Requirements.

The problem with software testing was never "writing tests is hard." It was always "tests only cover what someone remembered to write." In the AI coding era, this gap gets wider — your coding agent ships faster than any human can track requirements. The fix isn't "AI writes more tests." It's changing the source of truth: start from requirements, not from code. HelpMeTest turns your PRD into a continuous verification harness.

Key Takeaways

Tests have a coverage blindspot. They only verify what someone explicitly wrote a test for. Every requirement that never became a test is a silent breakage waiting to happen.

AI coding agents make this worse, not better. They ship code faster than any human can trace requirements. A feature can be partially implemented — or completely broken — with all tests passing.

The fix is requirements-as-harnesses. Derive your test harness from your product requirements, not from your memory of what you coded. If the requirement exists, the check exists.

HelpMeTest runs it continuously. Not just in CI. Every 5 minutes, against your production environment. You find out when requirements fail — not when users do.

This is the AI-native testing workflow. AI reads your PRD, generates behavior checks, HelpMeTest runs them forever. You write certainty, not test scripts.

The Real Problem with Test Coverage

Everyone talks about test coverage like it's a percentage problem. Get to 80% and you're safe. Get to 95% and you're very safe.

This is wrong.

Test coverage measures how much of your code the tests touch. It says nothing about whether your tests actually cover your requirements. You can have 100% code coverage and still ship a broken checkout flow — if nobody wrote a test for the specific edge case your users hit.
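To make that concrete, here is a minimal sketch in Python. The `Cart` class and both function names are hypothetical, invented for this illustration. The single test executes every line of `Cart.add`, so a line-coverage tool would report 100%, yet the out-of-stock requirement is violated.

```python
from dataclasses import dataclass, field


@dataclass
class Cart:
    """Hypothetical cart used only for this illustration."""
    items: list = field(default_factory=list)

    def add(self, name: str, in_stock: bool = True) -> int:
        # Bug: `in_stock` is never consulted, so the requirement
        # "out-of-stock items cannot be added" is silently violated.
        self.items.append(name)
        return len(self.items)


def test_add_item_works() -> None:
    # Executes every line of Cart.add, so line coverage is 100%.
    cart = Cart()
    assert cart.add("widget") == 1


def out_of_stock_requirement_holds() -> bool:
    # The check nobody wrote: derived from the requirement, not the code.
    cart = Cart()
    cart.add("sold-out-widget", in_stock=False)
    return len(cart.items) == 0


test_add_item_works()                    # passes
print(out_of_stock_requirement_holds())  # False: the requirement is broken
```

The coverage number and the passing test are both accurate. They just answer a different question than "does the product do what the spec says?"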

The actual coverage problem is simpler and harder to measure: tests only cover what someone remembered to write.

Every requirement that existed in a design document but never made it into a test file is an invisible risk. It might work today. It might break tomorrow. You won't know until a user tells you.

This was always true. In the AI coding era, it's gotten worse.

What AI Coding Did to the Problem

Before AI coding tools, there was a natural (inefficient) forcing function: writing code took time. Developers would sit with a feature long enough to have it in their head. When they wrote tests, they had context. They'd think "oh right, this also needs to handle the empty state" and write that test.

Slow, but it caught things.

With AI coding agents, the loop is: describe requirement → agent writes code → done. The agent that wrote the code has no persistent memory of what it was supposed to accomplish. It implements what you described in that session. If your description missed an edge case, the code misses it. If your description missed a requirement entirely, the code is silent on it.

And if no test ever checks that requirement, nobody knows.

Here's what a typical AI coding workflow looks like today:

    PRD:
    - Users can add items to cart
    - Cart persists across page refreshes
    - Out-of-stock items cannot be added
    - Cart shows item count in header

    Coding agent implements: add to cart ✅, header count ✅
    Misses: persistence across refresh, out-of-stock validation

    Tests written: "add item works", "header count updates"
    Tests covering persistence: 0
    Tests covering out-of-stock: 0

    Result: Two requirements broken, all tests green

This is not a hypothetical. It happens constantly. The agent isn't broken; it just never verified its own coverage against the original requirements.
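The missing verification step is small enough to sketch. The Python below assumes each requirement has an ID and each test declares which requirement it verifies. The `REQ-n` labels and the mapping are hypothetical, not something the PRD or HelpMeTest defines.

```python
# Requirement IDs are hypothetical labels for the PRD lines above.
REQUIREMENTS = {
    "REQ-1": "Users can add items to cart",
    "REQ-2": "Cart persists across page refreshes",
    "REQ-3": "Out-of-stock items cannot be added",
    "REQ-4": "Cart shows item count in header",
}

# Which requirement each existing test claims to verify.
TESTS = {
    "add item works": "REQ-1",
    "header count updates": "REQ-4",
}


def uncovered(requirements: dict[str, str], tests: dict[str, str]) -> list[str]:
    """Return the IDs of requirements that no test points at, in PRD order."""
    covered = set(tests.values())
    return [req_id for req_id in requirements if req_id not in covered]


for req_id in uncovered(REQUIREMENTS, TESTS):
    print(f"NO CHECK: {req_id} {REQUIREMENTS[req_id]}")
```

Run against the example, it flags REQ-2 and REQ-3: exactly the two requirements that were broken while every test stayed green.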

Tests Derived from Code vs. Tests Derived from Requirements

There are two fundamentally different ways to think about where tests come from.

Tests derived from code (the current default): You look at what you built and write tests that exercise it. You test the happy path you just coded. You test the edge cases you thought of while coding. You miss the requirements you didn't code yet, or coded wrong.

Tests derived from requirements (the shift): You start from what you're supposed to build. You turn each requirement into a check. You run those checks continuously. If the requirement exists in the spec, it gets checked.

The second approach isn't new — it's been the theory behind BDD and acceptance testing for 20 years. What's new is that AI makes it practical. You don't have to manually translate Gherkin scenarios into Playwright scripts. You describe what your product should do, and the AI generates the verification harness.

What's also new is that running those checks continuously — against production, not just in CI — is now a single-command setup rather than a platform engineering project.

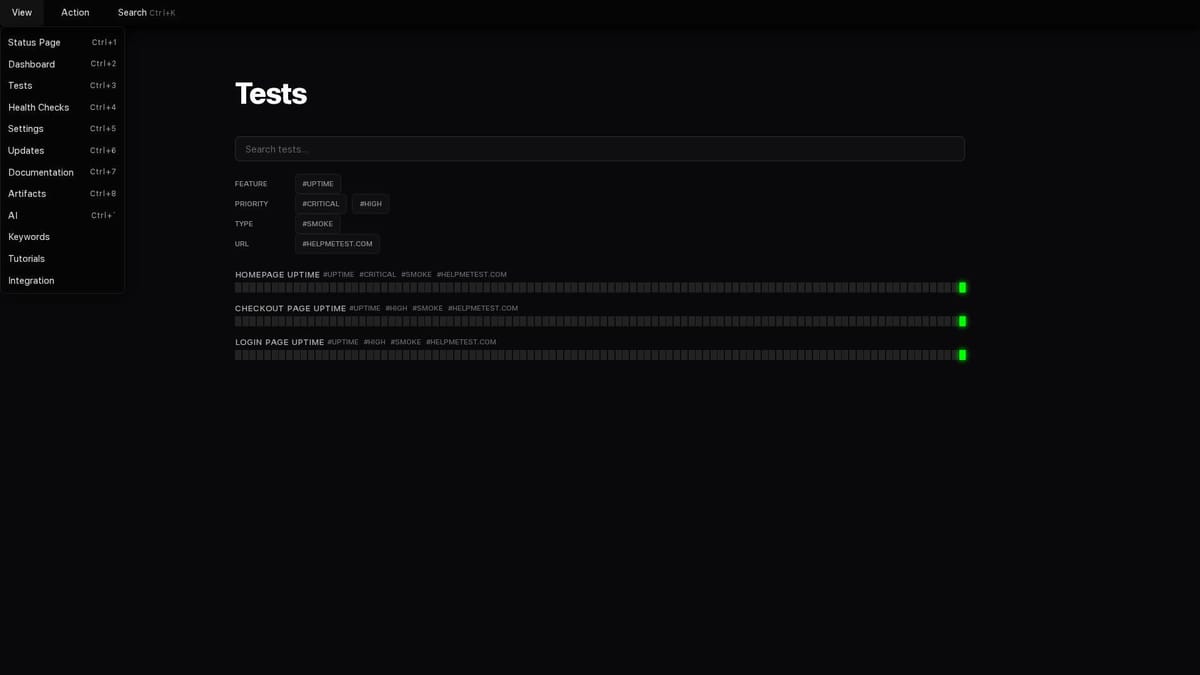

How HelpMeTest Implements This

HelpMeTest is built around a simple model: describe what your product should do, run those checks continuously, get notified when something breaks.

The workflow looks like this:

Step 1: Your requirements live in HelpMeTest as Artifacts

The Artifacts system in HelpMeTest is designed exactly for this. You store your product requirements — in whatever form they exist: PRD excerpts, user stories, acceptance criteria, design specs. The AI uses these as context when generating tests.

Step 2: AI generates the harness from requirements, not from code

When you ask HelpMeTest to generate tests for a feature, it starts from the requirements you've stored, not from the codebase. The output is a set of Robot Framework checks that verify each requirement.

For the cart example above, a requirements-driven harness looks like:

    *** Test Cases ***
    Cart Persists Across Refresh
        Go To    https://shop.example.com/product/widget
        Click    button[data-testid="add-to-cart"]
        Wait For Elements State    .cart-count    visible    timeout=5s
        Reload
        Get Text    .cart-count    ==    1

    Out Of Stock Items Cannot Be Added
        Go To    https://shop.example.com/product/out-of-stock-item
        Wait For Elements State    button[data-testid="add-to-cart"]    visible    timeout=5s
        # A bare `disabled` attribute has an empty value, so check the element state rather than the attribute text
        Wait For Elements State    button[data-testid="add-to-cart"]    disabled    timeout=5s

    Cart Count Updates In Header
        Go To    https://shop.example.com
        Click    nav a[href="/cart"]
        ${initial_count}=    Get Text    .cart-header-count
        Go To    https://shop.example.com/product/widget
        Click    button[data-testid="add-to-cart"]
        ${new_count}=    Get Text    .cart-header-count
        Should Not Be Equal    ${initial_count}    ${new_count}

These checks come from the requirements. Not from looking at what code exists. If the AI coding agent forgot to implement persistence, the first check, Cart Persists Across Refresh, fails immediately.

Step 3: HelpMeTest runs these checks continuously

This is the part that matters. It's not enough to run these in CI. CI runs when you push. Requirements can break at any time: a database migration, a third-party API change, a configuration drift in production.

HelpMeTest runs your requirements harness every 5 minutes against your production environment. Not your staging environment. The real thing, where your users are.

When a requirement fails, you get an alert. You know before your users do.
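To show the shape of that loop, here is a rough Python sketch. It is an illustration under stated assumptions, not how HelpMeTest is implemented. The check names, the `alert` callback, and the `rounds` parameter (which only exists to keep the demo finite) are all made up.

```python
import time
from typing import Callable, Optional

Check = Callable[[], bool]


def run_once(checks: dict[str, Check], alert: Callable[[str], None]) -> list[str]:
    """Run every check once; alert on each failure and return the failed names."""
    failed = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            ok = False  # a crashing check counts as a failed requirement
        if not ok:
            failed.append(name)
            alert(f"requirement failed: {name}")
    return failed


def run_forever(
    checks: dict[str, Check],
    alert: Callable[[str], None],
    interval_seconds: int = 300,
    sleep: Callable[[float], None] = time.sleep,
    rounds: Optional[int] = None,
) -> None:
    """Repeat run_once every interval; `rounds` bounds the loop for demos."""
    done = 0
    while rounds is None or done < rounds:
        run_once(checks, alert)
        done += 1
        if rounds is None or done < rounds:
            sleep(interval_seconds)


# Hypothetical stand-ins for real browser checks.
checks = {
    "cart persists across refresh": lambda: False,
    "header shows cart count": lambda: True,
}
alerts: list[str] = []
run_forever(checks, alerts.append, sleep=lambda seconds: None, rounds=2)
print(alerts)
```

The point of the sketch is the trigger: the loop is driven by the clock, not by a push, so a migration or config drift that breaks a requirement between deploys still surfaces on the next round.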

The Safety Net for AI-Coded Features

The most practical value of requirements-as-harnesses shows up when you're using AI coding agents to build new features.

Here's the pattern that works:

- Write your requirements (in HelpMeTest Artifacts, in a CLAUDE.md file, in a PRD — wherever)

- Before coding starts, generate the requirements harness from those requirements

- Run the harness — it fails, because nothing is built yet. That's correct.

- Tell your coding agent: "implement this feature. The tests tell you when you're done."

- Agent implements. Tests go green. You're done.

The harness acts as the specification. The agent codes against it. You never have a situation where "the agent thought it was done but missed requirement B" — because requirement B has a test that will fail.

This is the pattern described in HelpMeTest's TDD skill: write all tests before any implementation. The difference now is that "write tests" means "derive checks from requirements" — not "think of what to test while writing code."
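The red-to-green loop can be simulated in a few lines of Python. Everything here is a toy: `implemented` stands in for the product, `agent_step` stands in for a coding agent, and the requirement names are illustrative. What it shows is that "done" is decided by the harness, not by the agent.

```python
from typing import Callable

# Hypothetical product state: the set of behaviors implemented so far.
implemented: set[str] = set()

# One check per requirement; each inspects the product, not the agent's claims.
harness: dict[str, Callable[[], bool]] = {
    "add to cart": lambda: "add to cart" in implemented,
    "persist across refresh": lambda: "persist across refresh" in implemented,
    "block out-of-stock": lambda: "block out-of-stock" in implemented,
}


def failing(checks: dict[str, Callable[[], bool]]) -> list[str]:
    """Names of the checks that currently fail, in harness order."""
    return [name for name, check in checks.items() if not check()]


def agent_step(todo: list[str]) -> None:
    """Stand-in for a coding agent: implements the first failing requirement."""
    implemented.add(todo[0])


assert failing(harness) == list(harness)  # red before any code exists

steps = 0
while todo := failing(harness):
    agent_step(todo)
    steps += 1

print(steps, failing(harness))  # 3 []
```

If the agent had stopped after two steps and declared victory, `failing(harness)` would still name the third requirement.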

Requirements HelpMeTest Catches That Traditional Tests Don't

Here's the gap requirements-driven testing closes in practice:

| Scenario | Traditional tests | Requirements harness |
|---|---|---|
| Coding agent implements 3 of 5 requirements | Green (3 tests pass, 2 never written) | Red (2 checks fail immediately) |
| UI refactor breaks behavior but not structure | Green (DOM selectors updated in test) | Red (behavior assertion fails) |
| Background job stops running silently | Green (no test for job effects) | Red (data freshness check fails) |
| Third-party API response format changes | Green (mocked in tests) | Red (live integration check fails) |
| Feature works but non-functional req fails (e.g., response time) | Green (no perf test) | Red (timing assertion fails) |

The pattern: traditional tests protect the code you wrote tests for. Requirements harnesses protect the product you're supposed to have built.
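The third-party API row is worth a closer look, because the mocked test is not wrong, just frozen in time. A minimal Python sketch, with a hypothetical payload shape and function names:

```python
from typing import Callable


def parse_total(payload: dict) -> int:
    """Hypothetical client code written against the old response format."""
    return payload["total_cents"]


def mocked_test() -> bool:
    # The mock is frozen at the format the developer saw when writing the test.
    mock_response = {"total_cents": 1999}
    return parse_total(mock_response) == 1999


def live_check(fetch: Callable[[], dict]) -> bool:
    # `fetch` stands in for a real HTTP call to the third-party API.
    try:
        return parse_total(fetch()) == 1999
    except KeyError:
        return False


def provider_after_change() -> dict:
    # Simulates the provider restructuring the field.
    return {"total": {"amount": 1999, "currency": "USD"}}


print(mocked_test())                      # True
print(live_check(provider_after_change))  # False
```

The mocked test keeps passing forever, because it only ever sees its own fixture. Only a check that talks to the live dependency notices the change.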

Health Checks as Requirement Verification

One often-overlooked part of this model: not every requirement is a test.

Some requirements are operational: "the service is available", "the API responds within 2 seconds", "background processing completes within 5 minutes."

HelpMeTest's health monitoring handles these. You use it like this:

    # In your deployment pipeline: this heartbeat tells HelpMeTest
    # "the checkout service just ran successfully, expect the next one in 5 minutes"
    helpmetest health "checkout-processor" "5m"

    # In your API: reports that the API is alive with a 30s grace period
    helpmetest health "payment-api" "30s"

If the heartbeat stops arriving, HelpMeTest alerts you. This is a requirement expressed as an operational check: "checkout processing should run every 5 minutes." If it doesn't, something's wrong, and you find out in 5 minutes, not when a customer calls.
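The logic behind a heartbeat with a grace period is simple to sketch. The Python below is an assumption about how such a monitor could decide that a check is overdue, not a description of HelpMeTest internals. The supported units (`s`, `m`, `h`) are guessed from the `"5m"` and `"30s"` values above.

```python
import re

_UNITS = {"s": 1, "m": 60, "h": 3600}


def parse_grace(text: str) -> int:
    """Convert a duration such as "30s" or "5m" into seconds."""
    match = re.fullmatch(r"(\d+)([smh])", text)
    if match is None:
        raise ValueError(f"unsupported duration: {text!r}")
    return int(match.group(1)) * _UNITS[match.group(2)]


def is_overdue(last_seen: float, grace: str, now: float) -> bool:
    """True when no heartbeat has arrived within the grace period."""
    return now - last_seen > parse_grace(grace)


# Timestamps are plain seconds to keep the example deterministic.
print(is_overdue(last_seen=0, grace="5m", now=240))  # False
print(is_overdue(last_seen=0, grace="5m", now=301))  # True
```

The grace period is the requirement itself, written as a number: pick it from what the business needs ("checkout processing runs every 5 minutes"), not from what the job happens to do today.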

Together, behavioral tests and health checks cover both kinds of requirement: user-facing behavior and operational behavior.

What This Changes Day-to-Day

The mental model shift matters practically:

Before: "Let's write some tests for this feature." → Test debt accumulates. Coverage is an afterthought. Tests only protect code that happened to get tested.

After: "Let's codify what this feature is supposed to do." → Requirements become the first artifact. The harness runs from day one. Coverage is structural, not accidental.

For teams using AI coding agents, this is the difference between shipping fast and shipping correctly. Agents are excellent at implementing specified behavior. They struggle with requirements that never made it into the specification they were given.

A continuously running requirements harness makes the gap visible immediately: during development, while the agent is still coding against it, and within minutes in production, before a user notices and before it becomes a support ticket.

Getting Started

The workflow:

    # Install HelpMeTest CLI
    curl -fsSL https://helpmetest.com/install | bash
    helpmetest login

    # Connect your AI coding tool
    helpmetest install mcp --claude HELP-your-token

Once connected, your AI coding agent can:

- Read your requirements from HelpMeTest Artifacts

- Generate a requirements harness

- Run checks against your production environment

- Report failures back in the same context where you're coding

The loop closes: requirements → harness → continuous verification → instant feedback when something breaks.

You stop writing tests. You start writing certainty.