HelpMeTest vs Coding Agents: What's Actually Different

Coding agents are good at generating test scripts. They're not a testing platform. If your tests only run when you ask them to, and break every time the UI changes, you don't have a testing system — you have a collection of scripts. HelpMeTest is the infrastructure that makes those scripts actually work, continuously, without you babysitting them.

Key Takeaways

Coding agents generate. HelpMeTest executes. A Playwright script your agent wrote isn't running right now. HelpMeTest's tests are.

Tests break when UI changes. HelpMeTest fixes them automatically. Self-healing means you don't get paged to re-prompt your agent at 2am.

No browser infrastructure. Coding agents have no cloud browser grid. HelpMeTest does — parallel execution, screenshots, session replay, visual checks.

Production monitoring isn't code generation. 24/7 health checks, grace periods, Slack alerts — none of that is something your coding agent does.

They work together. HelpMeTest has an MCP server. Your coding agent can create, run, and read test results without leaving its environment.

The Real Problem with Coding Agent Tests

Ask Claude, Cursor, or Copilot to write an end-to-end test and you'll get something that works. A Playwright script, a Robot Framework file, maybe a Cypress spec. It looks right. You run it once. It passes.

Then you deploy a new feature two weeks later and it fails. The button selector changed. The page structure shifted. The test was never updated. It sits broken in your repo while your production app is doing whatever it wants.

This isn't a problem with the coding agent. It's a category error. Coding agents are code generation tools. Testing is an ongoing operation — something that has to run continuously, recover from change, and alert you when real things break.

Those are not the same job.

What Coding Agents Actually Do

| Task | Coding Agent |
|---|---|
| Generate a test script from a description | ✅ |
| Write assertions based on your requirements | ✅ |
| Fix a broken test when you paste in the error | ✅ |
| Run tests continuously while you sleep | ❌ |
| Self-heal when UI elements change | ❌ |
| Alert you when production breaks at 3am | ❌ |
| Maintain a browser grid for parallel execution | ❌ |
| Track test history and failure trends | ❌ |
| Monitor server health with grace periods | ❌ |
| Capture session replays when things fail | ❌ |

A coding agent is reactive. It does something when you ask it to. Testing infrastructure is proactive — it runs regardless of what you're doing, finds problems before your users do, and tells you about them.

What Breaks Without a Testing Platform

Problem 1: Tests only run when triggered

A Playwright script on your local machine doesn't run at 2am when your database connection pool maxes out. It doesn't run after your teammate's PR merges. It doesn't run during the holiday week when no one's watching.

HelpMeTest runs your tests every 5 minutes on a cloud browser grid. You don't have to think about it.

Problem 2: UI changes break scripts with no warning

Your coding agent wrote a test targeting .submit-btn. Someone changed it to button[data-testid="submit"]. The test fails silently in CI for three weeks before anyone notices.

HelpMeTest's self-healing engine detects when a selector stops working and finds the new one automatically. You get a notification that the test was updated, not a page that something's down.

Problem 3: No browser infrastructure

Running Playwright at scale means browser farms, parallel workers, screenshot storage, video capture. That's infrastructure you don't want to manage.

HelpMeTest provides a managed cloud browser grid. Tests run in parallel, screenshots and session replays are captured automatically, and you see a timeline of what happened — not just a pass/fail.

Problem 4: You're the glue

With a coding agent, you are the monitoring system. You notice the test hasn't run. You paste the error into the agent. You ask it to fix the selector. You run it again. You check if it passes.

That loop is a part-time job if you have more than 10 tests.

Problem 5: Production monitoring is a different beast

End-to-end tests catch regressions. Health checks catch outages. A system that checks your /health endpoint every 5 minutes and alerts Slack when a check fails is not something you generate with a coding agent. It's a system you configure once and rely on.

helpmetest health "production-api" "5m"

One command. If the heartbeat stops for 5 minutes, you get alerted. Your server's CPU, memory, hostname, and IP are logged automatically. You see a timeline.
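To make the grace-period idea concrete, here is a minimal sketch of the decision a heartbeat monitor has to make. It is not HelpMeTest's implementation: the `parse_grace` and `is_overdue` helpers are hypothetical, and the only thing borrowed from the command above is the "5m" / "2h" style of writing a duration.

```python
from datetime import datetime, timedelta

# Hypothetical helper: parse a grace period written like "30s", "5m", or "2h".
_UNITS = {"s": "seconds", "m": "minutes", "h": "hours", "d": "days"}


def parse_grace(text: str) -> timedelta:
    """Turn a string such as "5m" into a timedelta."""
    value, unit = int(text[:-1]), text[-1]
    return timedelta(**{_UNITS[unit]: value})


def is_overdue(last_heartbeat: datetime, grace: str, now: datetime) -> bool:
    """True once the silence since the last heartbeat exceeds the grace period."""
    return now - last_heartbeat > parse_grace(grace)


last_seen = datetime(2024, 1, 1, 2, 0, 0)

# Four minutes of silence is still inside a 5-minute grace period: no alert.
print(is_overdue(last_seen, "5m", datetime(2024, 1, 1, 2, 4, 0)))   # False
# Six minutes of silence is past it: time to alert.
print(is_overdue(last_seen, "5m", datetime(2024, 1, 1, 2, 6, 0)))   # True
# A long-running job with a 2-hour window is fine after 90 minutes.
print(is_overdue(last_seen, "2h", datetime(2024, 1, 1, 3, 30, 0)))  # False
```

The point of the sketch is that the check itself is trivial. What a coding agent can't give you is something that keeps evaluating it around the clock.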

How HelpMeTest Is Different

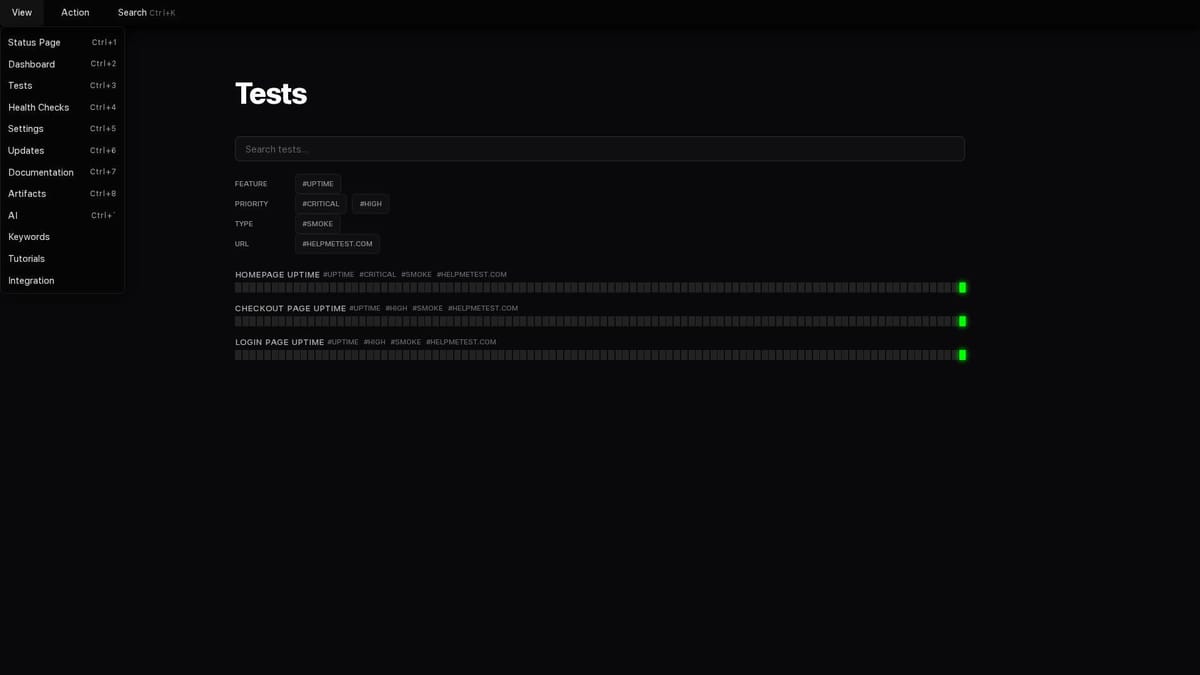

It's a platform, not a script

Your tests live in the cloud. They run on a schedule. Results are stored with history, screenshots, and failure context. When a test fails, you see a full session replay of what happened — not just an error message.

Self-healing that actually works

When the DOM changes, HelpMeTest's AI finds the new selector using runtime context: browser traces, DOM snapshots, network logs. Not just a re-prompt. It looks at what the browser actually saw and figures out what changed.
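As a rough illustration of what "finding the new selector" can mean, the sketch below matches an element's last known attributes against a simplified DOM snapshot. This is an assumption-heavy toy, not HelpMeTest's engine: the `score` and `heal` functions, the dict-based snapshot, and the `min_score` threshold are all made up for the example.

```python
from typing import Optional

# A DOM snapshot reduced to plain dicts; a real snapshot is far richer.
Element = dict


def score(candidate: Element, last_known: Element) -> int:
    """Count attributes the candidate shares with the element the test used to hit."""
    return sum(1 for key, value in last_known.items() if candidate.get(key) == value)


def heal(last_known: Element, snapshot: list[Element], min_score: int = 2) -> Optional[Element]:
    """Return the best-matching element, or None if nothing is close enough."""
    best = max(snapshot, key=lambda el: score(el, last_known), default=None)
    if best is None or score(best, last_known) < min_score:
        return None
    return best


# What the test matched before the change (selector: .submit-btn).
before = {"tag": "button", "class": "submit-btn", "text": "Submit", "type": "submit"}

# The page after the change: the class is gone, a data-testid appeared.
after = [
    {"tag": "a", "text": "Cancel"},
    {"tag": "button", "data-testid": "submit", "text": "Submit", "type": "submit"},
]

match = heal(before, after)
print(match["data-testid"])  # submit
```

Even in this toy version, the healing step depends on knowing what the element looked like at runtime, which is exactly the context a re-prompted agent doesn't have.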

Browser state you don't manage

Logging in before every test is slow and fragile. HelpMeTest persists browser state across tests. Log in once with Save As Admin, then every test that needs authentication just starts with As Admin. No re-login. No flakiness from auth flows.

# Set up once
Go To    https://app.example.com/login
Fill Text    input[name="email"]    user@example.com
Fill Text    input[name="password"]    s3cr3t
Click    button[type="submit"]
Save As    Admin

# Every test that needs auth
As    Admin
Go To    https://app.example.com/billing

Health monitoring with grace periods

Some failures are expected to resolve themselves. A deployment takes 30 seconds. A cron job runs every hour. You don't want alerts for transient blips.

HelpMeTest health checks have configurable grace periods. A database backup that takes 2 hours gets a 2-hour grace period. If it fails to report back within that window, then you get alerted.

helpmetest health "nightly-backup" "2h"

Visual testing built in

Screenshots aren't just for debugging. HelpMeTest compares page snapshots across viewports — mobile, tablet, desktop — and flags visual regressions with a similarity score. Your coding agent can write a test. It cannot tell you if the layout looks broken on an iPhone 14.
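For a sense of what a similarity score measures, here is a deliberately simple sketch: the fraction of pixels that match between a baseline snapshot and the current one. The `similarity` function, the tiny grayscale grids, and the 0.99 threshold are assumptions for illustration; how HelpMeTest actually computes its score isn't described here.

```python
def similarity(a: list[list[int]], b: list[list[int]], tolerance: int = 0) -> float:
    """Fraction of pixels whose grayscale values differ by at most `tolerance`."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("snapshots must have the same dimensions")
    total = sum(len(row) for row in a)
    if total == 0:
        raise ValueError("snapshots must not be empty")
    same = sum(
        1
        for row_a, row_b in zip(a, b)
        for pa, pb in zip(row_a, row_b)
        if abs(pa - pb) <= tolerance
    )
    return same / total


# Two 2x4 grayscale "screenshots"; one pixel changed in the bottom-right corner.
baseline = [[255, 255, 255, 255],
            [0, 0, 0, 0]]
current = [[255, 255, 255, 255],
           [0, 0, 0, 200]]

result = similarity(baseline, current)
print(f"{result:.3f}")   # 0.875
print(result >= 0.99)    # False, so this pair would be flagged
```

Running the same comparison per viewport is what turns a folder of screenshots into a regression check.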

How They Work Together

This isn't an either-or. HelpMeTest ships an MCP server that connects directly to Claude Code, Cursor, and other AI editors. Your coding agent gets tools to:

- Create tests in natural language

- Run tests and read results

- Check health check status

- Get test history and failure patterns

helpmetest install mcp --claude HELP-your-token

After that, your agent can say "run the smoke test suite" or "create a test for the checkout flow" and HelpMeTest does the execution. The agent writes, HelpMeTest runs. That's the intended workflow.

The Practical Comparison

Here's what the same workflow looks like with and without a testing platform:

With a coding agent only:

- You ask the agent to write a login test

- Agent writes a Playwright script

- You run it locally — passes

- Three weeks later, login breaks in production

- You find out from a user report

- You paste the error into the agent

- Agent fixes the script

- Repeat

With HelpMeTest + coding agent:

- You ask the agent to write a login test (or HelpMeTest generates it)

- Test runs in the cloud every 5 minutes

- Three weeks later, the UI changes and the selector breaks

- HelpMeTest self-heals the test automatically

- You get a Slack notification: "Test updated due to DOM change"

- Login breaks in production → the next scheduled run fails → you're alerted within 5 minutes

- You get a session replay showing exactly what the browser saw

The difference is who's watching between the moments you're paying attention.

Pricing Reality

A coding agent doesn't cost extra for testing — you're already paying for Claude Code or Copilot. But you're also spending time: debugging broken tests, re-prompting agents, manually checking if things still work.

HelpMeTest Free gives you 10 tests and unlimited health checks at $0. HelpMeTest Pro is $100/month for unlimited tests with parallel execution.

That's not a replacement for your coding agent. It's the infrastructure that makes the tests your coding agent writes actually useful.

Bottom Line

Coding agents are remarkably good at generating test code. They're not monitoring systems. They're not browser infrastructure. They don't heal themselves, run on a schedule, or alert you at 3am.

HelpMeTest is the platform that turns AI-generated tests into a real QA system. The two are better together: the agent writes, HelpMeTest runs. You review results, not process.