Exploratory Testing Guide: Techniques, Charters, and Session-Based Testing

Your scripted tests cover every scenario you thought of when you wrote them. Exploratory testing finds the scenarios you didn't think of — the ones users will discover on day one. It's not unstructured chaos; it's skilled investigation.

Key Takeaways

Exploratory testing finds bugs that scripted tests cannot. Unexpected interactions, edge cases no one considered, usability problems hidden in technically correct code — these require human curiosity.

Session-based test management (SBTM) gives exploratory testing structure without scripts. Time-boxed sessions with clear charters produce repeatable, documentable results.

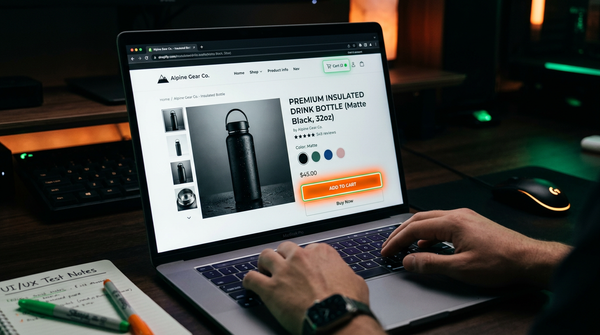

Test charters define the mission, not the steps. "Explore the checkout flow focusing on coupon code behavior" gives testers direction while leaving room for discovery.

Exploratory testing in Agile works best as a complement to automation, not a replacement. Automate the known, explore the unknown — every sprint.

Exploratory testing is simultaneous learning, test design, and test execution. Instead of following a pre-written script, the tester investigates the software, forms hypotheses about how it might fail, and immediately tests those hypotheses — all in the same session.

The term was coined by Cem Kaner in 1984 and later formalized by James Bach and Michael Bolton. It represents a fundamental shift in how we think about testing: from "executing a checklist" to "applying skill and judgment to find problems that matter."

Exploratory testing does not replace scripted testing. It finds the bugs that scripted tests cannot — the unexpected interactions, the edge cases no one thought of, the usability problems hidden in technically correct code.

What Is Exploratory Testing?

Exploratory testing is a testing approach where the tester simultaneously designs and executes tests, using what they learn from the software to guide subsequent testing decisions.

James Bach's definition: "Exploratory testing is simultaneous learning, test design, and test execution."

The key word is simultaneous. In scripted testing, test design and test execution are separate phases. In exploratory testing, they happen at the same time, with each executed test informing the next.

What Makes It Exploratory

A test is exploratory when the tester:

- Uses information discovered during testing to decide what to test next

- Designs new tests on the fly in response to what they observe

- Applies judgment about where the risks are highest

- Goes off-script when they encounter something unexpected

A test is not exploratory when:

- Every step is pre-defined before the session begins

- The tester follows a script and ignores unexpected behavior

- The focus is on confirming known behavior rather than discovering unknown behavior

The Mental Model

Think of exploratory testing as a feedback loop:

Observe → Hypothesize → Test → Learn → Observe → ...

You look at the software, form a hypothesis about how it might fail, test that hypothesis, learn something new, and use that knowledge to form the next hypothesis. The session is a rapid series of these cycles.

Exploratory vs Scripted Testing

Both have their place. Understanding the difference clarifies when to use each.

Scripted Testing

In scripted testing, test cases are designed in advance. Each test has defined steps, inputs, and expected outputs. The tester's job is to execute these tests faithfully.

Strengths:

- Reproducible — the same test runs the same way every time

- Measurable — you know exactly what was tested and what passed

- Auditable — required for regulatory compliance

- Automatable — scripted tests can be automated

Weaknesses:

- Tests only what was anticipated during design

- Misses unexpected interactions and emergent bugs

- Becomes stale as software evolves

- Requires significant upfront effort before testing can begin

- Testers follow scripts even when they observe suspicious behavior

Exploratory Testing

Strengths:

- Discovers bugs scripted tests miss — unexpected interactions, edge cases, usability issues

- Adapts to new information in real time

- Requires minimal setup time — start testing immediately

- Exercises human creativity, intuition, and domain knowledge

- Effective when requirements are unclear, incomplete, or changing

Weaknesses:

- Less reproducible — two testers exploring the same system may test very different things

- Coverage is harder to measure

- Quality depends heavily on the tester's skill and domain knowledge

- Cannot easily be automated

The Right Mix

Most mature QA practices use both:

| Activity | Scripted | Exploratory |
|---|---|---|
| Regression testing | ✓ | |
| Compliance testing | ✓ | |
| Initial feature exploration | | ✓ |
| Bug investigation | | ✓ |
| Usability evaluation | | ✓ |
| Integration testing | ✓ | ✓ |
| New/unknown features | | ✓ |
| Pre-release final check | ✓ | ✓ |

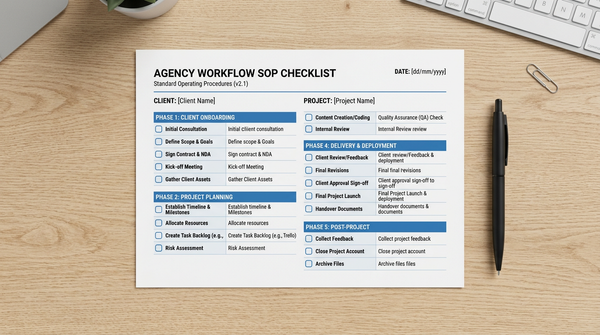

Session-Based Test Management (SBTM)

James Bach developed Session-Based Test Management to solve the main critique of exploratory testing: that it is unaccountable and unmeasurable.

SBTM provides structure through time-boxed sessions and test charters — without constraining what the tester can explore within those sessions.

The SBTM Structure

Session = Charter + Time Box + Debrief

Charter: The mission for this session ("What to test and why")

Time Box: 60-120 minute focused testing block

Debrief: Post-session review of findings, coverage, and issues

Session Sheet

Each session is documented in a session sheet:

Session: Login and authentication flows

Charter: Explore the login form to find security and usability issues

Tester: Sarah Chen

Date: 2026-03-12

Duration: 90 minutes

Areas covered:

- Login with valid credentials

- Login with invalid credentials (various combinations)

- Password field behavior (copy-paste, autocomplete)

- Forgot password flow

- Account lockout behavior

- Remember me functionality

- OAuth login (Google, GitHub)

Bugs found:

- BUG-001: Account lockout message reveals whether email is registered (info disclosure)

- BUG-002: Remember me token does not expire after password change

- BUG-003: OAuth login bypasses 2FA requirement

Issues / Questions:

- Unclear: After 5 failed attempts, does lockout apply to the email or the IP?

- Missing: No CAPTCHA on login, only lockout

Notes:

- Tested on Chrome 124, Mac OS

- Did not test: Mobile browsers, SSO, API token login

Session Metrics

SBTM enables measurement through session sheets:

- Sessions completed vs planned

- Time on testing vs overhead (setup, debriefs)

- Bug discovery rate per session

- Coverage areas (charters completed)
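As a rough illustration of how these metrics could be rolled up from session sheets, here is a minimal sketch. The `SessionSheet` fields and the `summarize` function are hypothetical names, not part of SBTM itself. The first sample row borrows its duration and bug count from the sheet above; the overhead minutes and the second row are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class SessionSheet:
    """One completed session. Field names are illustrative, not an SBTM standard."""
    charter: str
    duration_min: int   # total time box actually used
    overhead_min: int   # setup and debrief time inside that duration
    bugs_found: int


def summarize(sessions: list[SessionSheet], planned: int) -> dict[str, float]:
    """Roll session sheets up into the metrics listed above."""
    total = sum(s.duration_min for s in sessions)
    overhead = sum(s.overhead_min for s in sessions)
    bugs = sum(s.bugs_found for s in sessions)
    return {
        "sessions_completed": len(sessions),
        "sessions_planned": planned,
        # Share of session time spent testing rather than on overhead.
        "testing_share": (total - overhead) / total if total else 0.0,
        "bugs_per_session": bugs / len(sessions) if sessions else 0.0,
    }


sheets = [
    SessionSheet("Login and authentication flows", 90, 15, 3),
    SessionSheet("Forgot password flow", 60, 15, 1),  # invented sample row
]
print(summarize(sheets, planned=3))
```

Numbers like these are most useful as trends across sprints; a single session's bug count says little on its own.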

Writing Test Charters

A charter is the mission statement for an exploratory testing session. It defines what to test and what to look for — but not how to test.

Charter Format

The classic format: "Explore [target] with [resources/techniques] to find [information/bugs/risks]"

Examples:

Explore the checkout flow with a guest user account

to find usability barriers and error handling gaps.

Explore the search functionality with special characters and Unicode input

to find injection vulnerabilities and display bugs.

Explore the API rate limiting behavior under concurrent requests

to find race conditions and incorrect enforcement.

Explore the file upload feature with oversized files and unusual file types

to find security bypass opportunities and error handling weaknesses.

Charter Design Principles

Be specific enough to focus the session — "explore the application" is too broad. A good charter can be completed in 60-90 minutes.

Be open enough to allow discovery — the charter should not prescribe every step. Leave room for the tester's judgment.

Focus on risk — charters should target areas of high risk: recently changed code, complex interactions, boundary conditions, security-sensitive operations.

Define what you're looking for: the "to find" clause guides the tester's judgment about what counts as a finding worth reporting.
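If your team stores charters in a backlog or tool, the classic format and two of the principles above could be captured in a small structure. This is a sketch under assumptions: the `Charter` class, its field names, and the checks in `problems` are hypothetical heuristics, not an established standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Charter:
    target: str       # what to explore
    resources: str    # resources or techniques to use
    looking_for: str  # information, bugs, or risks to find

    def render(self) -> str:
        """Format the charter in the classic 'Explore ... with ... to find ...' form."""
        return f"Explore {self.target} with {self.resources} to find {self.looking_for}."

    def problems(self) -> list[str]:
        """Flag charters that break the principles above (heuristic checks only)."""
        issues = []
        if self.target.strip().lower() in {"the application", "the app", "the system"}:
            issues.append("target is too broad to finish in one session")
        if not self.looking_for.strip():
            issues.append('missing "to find" clause')
        return issues


charter = Charter(
    target="the checkout flow",
    resources="a guest user account",
    looking_for="usability barriers and error handling gaps",
)
print(charter.render())
print(Charter("the application", "any browser", "").problems())
```

Note that the structure deliberately has no field for steps: the charter names the mission and leaves the route to the tester.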

Charter Categories

Feature exploration: Understand how a new or unfamiliar feature works.

Explore the new notifications system to understand all trigger conditions

and find cases where notifications are missing or duplicated.

Risk-based: Target high-risk areas based on complexity, recent changes, or past bug density.

Explore the payment processing code changes from this sprint

to find regression bugs and edge cases in the new retry logic.

User journey: Follow realistic user workflows to find integration failures.

Explore the complete onboarding flow for a new user

to find friction points, errors, and data consistency issues.

Boundary testing: Focus on limits and edge cases.

Explore the data import feature with various file sizes, encoding types,

and malformed data to find places where errors are not handled gracefully.

Exploratory Testing Techniques

Experienced exploratory testers apply specific mental models and techniques to guide their investigation.

1. Boundary Value Exploration

Push inputs to their limits:

- Minimum and maximum field lengths

- Zero, negative numbers, very large numbers

- Empty inputs vs whitespace-only inputs

- Valid format, wrong value (e.g., future date in a birthdate field)

- Exactly at the limit, one above, one below
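A small helper can generate a starting set of boundary inputs so the session time goes into observing behavior rather than typing values. This is a minimal sketch; `boundary_values` and `boundary_strings` are hypothetical helper names, and the limits passed in are whatever the field under test documents.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Return values just below, at, and just above each limit."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = [minimum - 1, minimum, minimum + 1,
                  maximum - 1, maximum, maximum + 1]
    # dict.fromkeys removes duplicates while keeping order (narrow ranges overlap).
    return list(dict.fromkeys(candidates))


def boundary_strings(max_length: int) -> dict[str, str]:
    """Text inputs for a field with a maximum length."""
    return {
        "empty": "",
        "whitespace_only": "   ",
        "one_below_limit": "a" * (max_length - 1),
        "at_limit": "a" * max_length,
        "one_above_limit": "a" * (max_length + 1),
    }


print(boundary_values(1, 100))  # [0, 1, 2, 99, 100, 101]
print({name: len(text) for name, text in boundary_strings(50).items()})
```

The generated values are only a seed list. What the system does with each one, and which value to try next, remains the tester's call.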

2. Tour-Based Testing

Jonathan Kohl and James Whittaker developed software "tours" as mental models for exploration:

The Money Tour: Follow the paths most important to the business (e.g., purchasing flow, subscription signup).

The Landmark Tour: Navigate from one significant feature to another, like a tourist visiting landmarks.

The Garbage Collector Tour: Look for orphaned features, dead links, and abandoned functionality that still exists in the UI.

The Antisocial Tour: Try every path that other users would avoid (canceling in the middle of a form, going back when you should go forward).

The Supermodel Tour: Focus entirely on appearance — visual bugs, layout issues, inconsistent terminology.

3. Error Guessing

Apply past experience to guess where bugs are most likely to be:

- "In my experience, file upload features often have encoding issues with special characters in filenames"

- "This pagination code looks like the kind that has off-by-one errors at the last page"

- "New developers often forget to validate inputs on the API layer even when the UI validates"

Error guessing is not random — it is pattern matching based on experience with similar software and common bug types.
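To make the pagination guess concrete, here is a sketch of the kind of off-by-one bug that experience teaches testers to suspect. Both functions are hypothetical; they only show why probing totals around a multiple of the page size is a productive guess.

```python
def page_count_buggy(total_items: int, page_size: int) -> int:
    # Floor division drops the final partial page.
    return total_items // page_size


def page_count(total_items: int, page_size: int) -> int:
    # Ceiling division keeps the final partial page.
    return -(-total_items // page_size)


# Probe around multiples of the page size, where the two versions diverge.
for total in (0, 19, 20, 21):
    print(total, page_count_buggy(total, 10), page_count(total, 10))
```

With a page size of 10, the two versions agree at 0 and 20 items but disagree at 19 and 21, which is why a tester who suspects this pattern checks the last page with a partial set of results.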

4. State-Based Exploration

Test behavior that depends on system state:

- What happens when you perform an action in an unexpected order?

- What state persists between sessions (cookies, local storage, server-side)?

- Can you reach an operation that requires a precondition without meeting that precondition?

- What happens when state is corrupted or stale?
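One way to seed ideas for the "unexpected order" question is to enumerate action orderings and skip the one the design expects. This is a sketch with invented action names; substitute the operations your system actually exposes.

```python
from itertools import permutations

# Hypothetical cart actions; replace with the operations your system exposes.
ACTIONS = ["add_item", "apply_coupon", "log_out", "checkout"]
EXPECTED_ORDER = ("add_item", "apply_coupon", "checkout")


def unexpected_orderings(
    actions: list[str], length: int, expected: tuple[str, ...]
) -> list[tuple[str, ...]]:
    """List every ordering of `length` distinct actions except the expected one."""
    return [seq for seq in permutations(actions, length) if seq != expected]


ideas = unexpected_orderings(ACTIONS, 3, EXPECTED_ORDER)
print(len(ideas))  # 24 orderings of 3 out of 4 actions, minus the expected one
for seq in ideas[:5]:
    print(" -> ".join(seq))
```

The list grows quickly with more actions, so treat it as a menu to pick from using judgment about risk, not as a script to execute in full.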

Example: Shopping cart exploration

- Add item, then delete account. What happens to the cart?

- Add item in one browser tab, check it from another tab

- Add item, let session expire, return and checkout

- Add item, change its price in admin, attempt checkout

5. Pair Testing

Two testers working together on the same session:

- Navigator-Driver: One tester drives (executes), the other navigates (decides what to test next)

- Collaborators: Both testers test independently and compare observations

- Expert-Novice: Experienced tester with a domain expert or new team member

Pair testing surfaces different perspectives. The domain expert knows what matters to the business; the tester knows where systems typically fail.

6. Attack-Based Testing

Think like an adversary:

- What would I try if I were trying to steal data?

- How would I bypass authorization?

- Where can I inject malicious input?

- What could I do with normal user permissions that I should not be able to do?

Attack-based exploration is effectively informal security testing. For formal security testing, use a dedicated penetration testing methodology.

7. Coverage-Guided Exploration

Use risk analysis and coverage mapping to avoid biases:

- Which features have the most user traffic?

- Which areas have had the most bug reports historically?

- Which components were recently changed?

- Which integrations involve the most complex data flows?

Map your session charters to these risk areas to ensure systematic coverage.
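The four questions above can be turned into a rough ranking to decide which areas get charters first. This is a sketch under assumptions: the `Area` fields, the 1 to 5 scales, and the unweighted sum with a fixed bonus for recent changes are illustrative choices, and the sample scores are invented.

```python
from dataclasses import dataclass


@dataclass
class Area:
    name: str
    traffic: int                 # 1 (low) to 5 (high) user traffic
    past_bugs: int               # 1 to 5, historical bug reports
    recently_changed: bool
    integration_complexity: int  # 1 to 5, complexity of data flows


def risk_score(area: Area) -> int:
    """Unweighted sum plus a bonus for recent change; tune the weights per team."""
    bonus = 3 if area.recently_changed else 0
    return area.traffic + area.past_bugs + area.integration_complexity + bonus


areas = [
    Area("checkout", 5, 4, True, 5),
    Area("user settings", 2, 1, False, 1),
    Area("search", 4, 2, True, 2),
]
ranked = sorted(areas, key=risk_score, reverse=True)
for area in ranked:
    print(f"{risk_score(area):>2}  {area.name}")
```

A ranking like this mainly guards against the bias of always exploring the areas a tester finds most interesting; it does not replace judgment about what the scores leave out.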

When to Use Exploratory Testing

Ideal Scenarios

Early feature development: When requirements are still being understood. Exploratory testing produces both bugs and insights that improve the specification.

New or unfamiliar codebases: When the team is learning a system for the first time. Exploration builds understanding faster than scripted testing.

High-complexity features: Complex business rules, multi-step workflows, integration-heavy features where emergent behavior is hard to predict.

Before scripted test creation: Explore first to understand the feature, then write targeted scripted/automated tests based on what you learned.

Security and usability review: These dimensions are hard to test with scripts because what "correct" looks like requires human judgment.

After major refactoring: Even if unit tests pass, refactoring can introduce subtle behavioral changes that only exploration reveals.

Sprint demos and release candidates: A focused exploratory session on new features before release catches the issues automated regression cannot.

Less Ideal Scenarios

Regression testing: Once you know exactly what to verify, scripted and automated tests are more efficient and repeatable.

Compliance testing: Requires documented evidence of specific test execution. Scripted tests with evidence capture are required.

Volume/load testing: Requires systematic data generation and measurement, not exploration.

Reporting and Documentation

Exploratory testing often gets criticized as "unaccountable." Good documentation addresses this.

During the Session: Note-Taking

Take notes continuously during the session, not after. Effective session notes capture:

- Test ideas: What you are about to try

- Observations: What you noticed (including normal behavior)

- Bugs: Detailed enough to reproduce — steps, environment, expected vs actual

- Questions: Ambiguities to investigate later

- Coverage: What areas you explored vs did not explore

Tools: A simple text editor, a mind map, or a dedicated session note-taking tool (such as Rapid Reporter).

Bug Reports from Exploratory Sessions

Exploratory testing discovers bugs, but the bug report should be as precise as a scripted test:

Title: Guest checkout session expires silently after 15 minutes

Environment: Chrome 124, staging environment, guest checkout flow

Steps to Reproduce:

1. Add product to cart as guest user

2. Proceed to checkout, complete step 1 (contact info)

3. Wait 15+ minutes without advancing

4. Click "Continue to shipping"

Actual: Page reloads with empty cart, no error message

Expected: Session expiration warning before data loss, or data preserved

Severity: Major (users lose their cart data without explanation)

Debrief Reports

After each session, complete a brief debrief:

- What was chartered vs what was actually tested?

- What bugs were found?

- What areas need more testing?

- What did you learn about the system?

- What charters should be created based on this session?

Exploratory Testing in Agile

Exploratory testing fits naturally into agile development. As features are completed each sprint, exploratory sessions validate them before the sprint ends.

Sprint-Based Approach

Sprint planning: Create charters for new features being built in the sprint.

During sprint: As features complete development, run exploratory sessions. Findings feed back into development before the sprint ends.

Sprint review: Share exploratory testing findings as part of the sprint review.

Definition of Done: Consider requiring at least one exploratory session before a story is marked done.

Time Allocation

A typical allocation for a QA engineer in a 2-week sprint:

- 30-40% automated test development

- 30-40% exploratory testing (session-based)

- 20-30% scripted test execution, defect management, regression
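To see what these percentages mean in practice, the arithmetic can be worked through for one sprint. This is a sketch with two assumptions that are not in the text above: a sprint of 80 working hours (10 days of 8 hours) and 90-minute sessions.

```python
SPRINT_HOURS = 80     # assumption: 10 working days of 8 hours
SESSION_MINUTES = 90  # assumption: 90-minute time box per session

# Percentage ranges from the list above.
ALLOCATION = {
    "automated test development": (30, 40),
    "exploratory testing": (30, 40),
    "scripted execution, defect management, regression": (20, 30),
}


def hours_range(percent_range: tuple[int, int],
                total_hours: int = SPRINT_HOURS) -> tuple[int, int]:
    """Convert a (low, high) percentage range into whole hours."""
    low, high = percent_range
    return low * total_hours // 100, high * total_hours // 100


for activity, percents in ALLOCATION.items():
    low, high = hours_range(percents)
    print(f"{activity}: {low}-{high} hours")

low, high = hours_range(ALLOCATION["exploratory testing"])
print(f"exploratory sessions: {low * 60 // SESSION_MINUTES}-{high * 60 // SESSION_MINUTES}")
```

Under these assumptions, exploratory testing gets 24 to 32 hours, or roughly 16 to 21 sessions per sprint before subtracting debrief and setup time.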

Collaboration with Developers

Exploratory testing works best when QA and developers collaborate:

- Developers describe their implementation decisions so QA knows where the complexity is

- QA shares exploratory findings informally (not just as bug reports) to give developers early context

- Pair testing sessions with developer + QA surface deep integration issues

Common Mistakes

1. Treating Exploration as "Testing Without a Plan"

The most common misconception: exploratory testing means clicking around randomly. Without charters, skill, and strategy, exploration produces low-quality results.

Fix: Always start with a charter. Define what you are exploring and what you are looking for before each session.

2. No Documentation During Sessions

Valuable observations are lost if not written down immediately. Post-session documentation is far less accurate.

Fix: Document during the session, not after. Notes during exploration are part of the deliverable.

3. Sessions That Are Too Long

Sessions longer than 90-120 minutes produce diminishing returns due to mental fatigue. Coverage becomes superficial.

Fix: Time-box sessions to 60-90 minutes. Take a break, then start a new session with a new charter.

4. Only Using One Technique

Testers who rely exclusively on one exploration style (e.g., always boundary testing) miss categories of bugs.

Fix: Rotate through different techniques (tours, attack-based, state-based) across sessions.

5. No Debrief

Sessions without debriefs do not improve the testing process. Learnings from one session should inform the next.

Fix: Spend 10-15 minutes after each session in a debrief, even if solo. What did you find? What should be explored next? What did you not cover?

6. Confusing Exploration with Investigation

Exploration is proactive — finding unknown bugs. Investigation is reactive — understanding a known bug. They require different mindsets. Do not mix them in the same session.

Fix: Create separate charters for investigation ("Explore the cause of BUG-123") vs exploration ("Explore the user settings page to find usability issues").

FAQ

What is the difference between exploratory testing and ad hoc testing?

Ad hoc testing is informal testing without any structure or documentation: testing whatever comes to mind, without notes, without charters, without accountability. Exploratory testing is structured: it uses charters to define the mission, time-boxed sessions for focus, and session notes for documentation. Both involve creativity and judgment, but exploratory testing is accountable and reviewable; ad hoc testing is not.

Can exploratory testing be automated?

Not fully — automation executes scripted, deterministic tests. Some aspects of exploratory testing can be automated (fuzzing, property-based testing, random input generation), but the human judgment, creativity, and real-time adaptation that define exploratory testing cannot be replicated by scripts. The complementary approach: use automation for scripted regression; use humans for exploration.
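As an illustration of the part that can be automated, here is a minimal random input generation sketch using only the Python standard library. `normalize_query` is a hypothetical function under test, and the checked property (normalizing twice gives the same result as normalizing once) is one example of a property, not a complete test.

```python
import random
import string


def random_inputs(count: int, max_length: int, seed: int) -> list[str]:
    """Generate reproducible random strings mixing letters, symbols, and Unicode."""
    rng = random.Random(seed)  # a fixed seed makes any failure replayable
    alphabet = (string.ascii_letters + string.digits + string.punctuation
                + " \t\n" + "éß漢字🙂")
    return [
        "".join(rng.choice(alphabet) for _ in range(rng.randint(0, max_length)))
        for _ in range(count)
    ]


def normalize_query(text: str) -> str:
    """Hypothetical function under test: collapse whitespace in a search query."""
    return " ".join(text.split())


for sample in random_inputs(count=200, max_length=40, seed=1234):
    result = normalize_query(sample)
    # Property: normalizing an already normalized query changes nothing.
    assert normalize_query(result) == result, repr(sample)
```

The script can only check the property it was given. Noticing that a result looks wrong in a way nobody wrote a property for is still the human tester's job.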

How do you measure the effectiveness of exploratory testing?

Key metrics: bugs found per session-hour, bug severity distribution, charter completion rate, areas covered vs planned, time on testing vs overhead, and knowledge gained (questions answered about the system). Compare with scripted testing: if exploratory sessions consistently find bugs that automated/scripted tests miss, the exploratory effort is justified.

What skills make a good exploratory tester?

Curiosity: a genuine interest in understanding how things work and break. Domain knowledge: understanding what matters to the business. Technical breadth: knowing common bug patterns, vulnerability types, and system behaviors. Critical thinking: forming and testing hypotheses systematically. Communication: documenting and articulating findings clearly. Experience: pattern recognition from testing many systems.

How long should an exploratory testing session be?

Optimal session length is 60-90 minutes. Under 45 minutes is usually too short to build sufficient context. Over 120 minutes produces fatigue and diminishing returns. Time-box your sessions and take breaks between them. One focused 90-minute session typically produces more value than one unfocused 3-hour session.

What is session-based testing?

Session-based test management (SBTM) is a framework for managing exploratory testing through time-boxed sessions with charters. Each session has a defined mission (charter), runs for a fixed duration (typically 60-90 minutes), and ends with a debrief that documents findings, coverage, and questions. SBTM was developed by James Bach to make exploratory testing accountable, measurable, and manageable.

When should I use exploratory testing vs automated testing?

Use automated testing for regression — verifying that previously working behavior still works. Use exploratory testing for discovery — finding new bugs, validating new features, and uncovering unexpected behaviors. They are complementary: exploratory sessions often reveal which behaviors are important enough to warrant automated tests. A common pattern: explore first to understand the feature, then automate the critical paths you discovered.