Acceptance Testing and UAT Guide: Process, Examples, and Best Practices

Your QA team passed every test. Your users still found a broken checkout. Acceptance testing is the last gate between your code and your customers — it answers the only question that actually matters: does this software do what the business needs it to do?

Key Takeaways

Acceptance testing validates business requirements, not technical correctness. Unit tests can all pass while the product still fails to do what users expect.

UAT must be performed by real users or business representatives — not developers or QA. The people who wrote the code are the worst people to evaluate whether it solves the right problem.

Acceptance criteria must be defined before development begins. Writing acceptance criteria after the code is done is writing a test you already know passes.

Operational Acceptance Testing (OAT) covers what UAT misses. Backups, disaster recovery, and monitoring need separate validation from user workflows.

Acceptance testing is the final phase of software testing, where the system is evaluated against business requirements to determine whether it meets the criteria for delivery. Unlike unit or integration tests that verify technical correctness, acceptance testing verifies that the software does what stakeholders actually need it to do.

At the heart of acceptance testing is User Acceptance Testing (UAT) — the process where real end users or business representatives validate the software before it goes live. UAT is the last gate before production. If it fails, no amount of passing unit tests will save you.

This guide covers acceptance testing types, the UAT process end-to-end, how to write acceptance criteria, stakeholder roles, real-world UAT examples, and the common mistakes that derail UAT cycles.

What Is Acceptance Testing?

Acceptance testing answers a single question: "Does this software satisfy the agreed requirements?"

It sits at the top of the testing pyramid, after unit tests, integration tests, and system tests have already been run. Acceptance testing is the handshake between the development team and the business — formal verification that the system is ready for deployment.

The term comes from the concept of "acceptance criteria" in contracts. Before a contractor hands over a building, the client inspects it against the agreed specifications. Software acceptance testing works the same way.

What Acceptance Testing Is NOT

- It is not exploratory bug hunting (that is system testing)

- It is not performance benchmarking (that is performance testing)

- It is not security auditing (that is penetration testing)

- It is not "QA doing more testing" — UAT requires business stakeholders, not just QA engineers

Where Acceptance Testing Fits

Unit Tests → Does this function work correctly?

Integration Tests → Do these components work together?

System Tests → Does the whole system work end-to-end?

Acceptance Tests → Does the system meet business requirements?

Types of Acceptance Testing

User Acceptance Testing (UAT)

The most common form. End users or business representatives test the system against real-world scenarios. UAT validates business processes, not just features.

Operational Acceptance Testing (OAT)

Also called Production Acceptance Testing. Validates operational readiness — backup/recovery, monitoring, failover, deployment procedures. Typically run by operations or DevOps teams.

Alpha Testing

Conducted by internal teams (developers, internal users) before external release. Often informal, using real workflows under controlled conditions.

Beta Testing

Conducted by a selected group of real external users under real-world conditions. Feedback is used to identify issues before full public release.

Contract Acceptance Testing

Validates software against a formal contract specification. Common in outsourced development, government projects, and enterprise software procurement.

Regulation Acceptance Testing

Verifies compliance with regulatory requirements — GDPR, HIPAA, SOX, PCI-DSS. Requires documented evidence for auditors.

User Acceptance Testing (UAT) Process

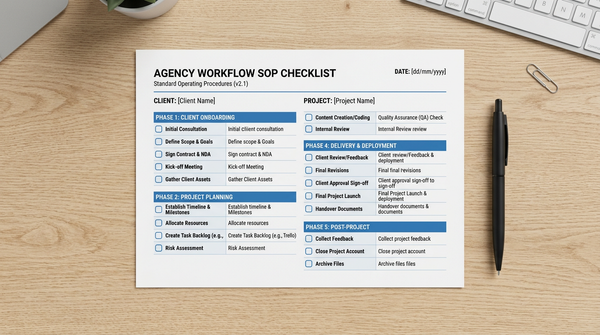

Phase 1: Planning (Before Development Ends)

UAT planning should start early — ideally at project kickoff, not after development is complete.

Activities:

- Define UAT scope and objectives

- Identify UAT stakeholders and assign roles

- Agree on acceptance criteria (see below)

- Plan the UAT environment

- Create a UAT schedule

- Identify test data requirements

Deliverables:

- UAT plan document

- Acceptance criteria list

- UAT schedule and resource allocation

Phase 2: Test Case Design

UAT test cases are written from the user's perspective, based on real business scenarios.

Key principles:

- Write test cases around business workflows, not technical features

- Include both happy paths and edge cases

- Define clear pass/fail criteria for each test case

- Keep language non-technical — business users will execute these

Example test case format:

| Field | Content |
|---|---|
| Test Case ID | UAT-TC-001 |
| Title | Customer places an online order |
| Preconditions | User has an active account; at least 1 item in catalog |
| Steps | 1. Log in 2. Search for "Widget Pro" 3. Add to cart 4. Checkout 5. Select shipping |
| Expected Result | Order confirmation email received; order appears in dashboard |
| Pass/Fail | |
| Notes | |
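If test cases are kept in a script or exported from a spreadsheet, the fields above map naturally onto a small record type. The following is a minimal sketch only; the class name `UatTestCase`, its field names, and the `status()` helper are illustrative assumptions, not part of any test management tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UatTestCase:
    """One UAT test case, mirroring the example fields (hypothetical structure)."""
    case_id: str
    title: str
    preconditions: list[str]
    steps: list[str]
    expected_result: str
    passed: Optional[bool] = None  # None until a tester records a result
    notes: str = ""

    def status(self) -> str:
        """Human-readable value for the Pass/Fail column."""
        if self.passed is None:
            return "Not run"
        return "Pass" if self.passed else "Fail"


case = UatTestCase(
    case_id="UAT-TC-001",
    title="Customer places an online order",
    preconditions=["User has an active account", "At least 1 item in catalog"],
    steps=["Log in", 'Search for "Widget Pro"', "Add to cart", "Checkout", "Select shipping"],
    expected_result="Order confirmation email received; order appears in dashboard",
)
```

Keeping `passed` as an optional value separates "not yet executed" from "failed", which matters when completion is reported during execution.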

Phase 3: UAT Environment Setup

The UAT environment must closely mirror production. A common failure is running UAT in a development environment whose data, integrations, or configuration differ from production.

Requirements:

- Production-equivalent infrastructure

- Sanitized copies of production data (or representative synthetic data)

- All third-party integrations active (payment gateways, email services, APIs)

- Access controls matching production roles

- Monitoring and logging enabled

Phase 4: Test Execution

Business users execute test cases following the documented steps. UAT testers are typically not technical — they need clear instructions and support.

Best practices during execution:

- Hold a UAT kickoff session to orient testers

- Have a technical resource available to answer questions (but not influence results)

- Use a defect tracking system for reporting issues

- Document deviations from expected behavior immediately

- Track completion rate daily

Phase 5: Defect Management

Defects found during UAT must be triaged by priority:

- Critical: Blocks a core business process; must be fixed before go-live

- Major: Significant impact on usability; usually blocks sign-off

- Minor: Cosmetic or low-impact; may be accepted with a fix scheduled post-launch

- Enhancement: Out of scope for current release; logged for backlog

Each defect goes back to development, is fixed, retested in UAT, and either closed or escalated.
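The triage levels above can be turned into an explicit go-live rule. This is a rough sketch under one assumption: critical and major defects both block sign-off. The text says major defects "usually" block, so the `BLOCKING` set is meant to be adjusted per project. All names here are hypothetical.

```python
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"
    MAJOR = "major"
    MINOR = "minor"
    ENHANCEMENT = "enhancement"


# Assumed decision rule: these severities block go-live while a defect is open.
BLOCKING = {Severity.CRITICAL, Severity.MAJOR}


def blocks_go_live(open_defects: list[Severity]) -> bool:
    """Return True if any open defect has a blocking severity."""
    return any(severity in BLOCKING for severity in open_defects)
```

Writing the rule down before UAT starts gives the business sponsor and the development team the same answer to "can we ship with this open?".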

Phase 6: UAT Sign-Off

Sign-off is the formal approval that the system meets acceptance criteria. This is typically documented in a sign-off report signed by the business sponsor.

Sign-off report includes:

- Summary of test execution (test cases run, passed, failed)

- List of open defects and their disposition

- Any accepted risks or deferred items

- Business sponsor signature authorizing go-live
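The execution summary in the sign-off report is simple arithmetic over recorded results. The sketch below assumes results are stored as a mapping from test case ID to one of the strings "pass", "fail", or "not_run"; that encoding and the function name `summarize_execution` are assumptions made for illustration.

```python
from collections import Counter


def summarize_execution(results: dict[str, str]) -> dict[str, object]:
    """Summarize UAT results keyed by test case ID ("pass", "fail", "not_run")."""
    counts = Counter(results.values())
    total = len(results)
    executed = counts["pass"] + counts["fail"]
    return {
        "total": total,
        "executed": executed,
        "passed": counts["pass"],
        "failed": counts["fail"],
        # Share of test cases that have been run, regardless of outcome
        "completion_rate": executed / total if total else 0.0,
    }


summary = summarize_execution({
    "UAT-TC-001": "pass",
    "UAT-TC-002": "fail",
    "UAT-TC-003": "not_run",
    "UAT-TC-004": "pass",
})
```

The same function can feed a daily completion figure during execution.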

Writing Acceptance Criteria

Acceptance criteria define the conditions that a feature must satisfy to be considered complete and acceptable. They are written before development begins — not as an afterthought.

The Importance of "Definition of Done"

Without clear acceptance criteria, "done" means different things to different people. The developer thinks it works because the unit tests pass. The product manager thinks it works because they saw a demo. The customer thinks it does not work because it does not handle their specific workflow.

Acceptance criteria eliminate this ambiguity.

Format 1: Given-When-Then (Gherkin)

Given a registered customer with items in their cart

When they click "Checkout" and complete the payment form

Then an order confirmation email is sent within 2 minutes

And the order appears in their account dashboard

And inventory is decremented by the ordered quantity

This format is precise and testable. Each "Then" clause is a verifiable outcome.
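To show how each clause becomes a check, here is a minimal sketch of the scenario as a Python test. `FakeShop` is a hypothetical in-memory stand-in for the real system, invented for this example; a real acceptance test would drive the actual application. The 2-minute email deadline is not checked here, because the fake sends its email synchronously.

```python
from dataclasses import dataclass, field


@dataclass
class FakeShop:
    """In-memory stand-in for the real system (hypothetical, illustration only)."""
    inventory: dict[str, int]
    sent_emails: list[str] = field(default_factory=list)
    dashboard_orders: list[dict[str, int]] = field(default_factory=list)

    def checkout(self, customer_email: str, cart: dict[str, int]) -> None:
        # Validate the whole cart first so a failure leaves inventory untouched
        for item, qty in cart.items():
            if self.inventory.get(item, 0) < qty:
                raise ValueError(f"Not enough stock for {item}")
        for item, qty in cart.items():
            self.inventory[item] -= qty
        self.dashboard_orders.append(dict(cart))
        self.sent_emails.append(customer_email)


def test_checkout_acceptance() -> None:
    # Given a registered customer with items in their cart
    shop = FakeShop(inventory={"Widget Pro": 5})
    cart = {"Widget Pro": 2}

    # When they complete checkout
    shop.checkout("customer@example.com", cart)

    # Then an order confirmation email is sent
    assert shop.sent_emails == ["customer@example.com"]
    # And the order appears in their account dashboard
    assert shop.dashboard_orders == [{"Widget Pro": 2}]
    # And inventory is decremented by the ordered quantity
    assert shop.inventory["Widget Pro"] == 3
```

Each "Then" and "And" line maps to exactly one assertion, which is what makes the format easy to automate later.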

Format 2: Checklist

Simple acceptance criteria can be written as a checklist:

- User can register with email and password

- Registration form validates email format

- Duplicate email addresses are rejected with a specific error message

- Confirmation email is sent after successful registration

- User can log in immediately after registration

Format 3: Specification Table

| Scenario | Input | Expected Output |
|---|---|---|
| Valid login | Correct email + password | Redirect to dashboard |
| Wrong password | Correct email + wrong password | Error: "Invalid credentials" |
| Account locked | 5 failed attempts | Account locked, email sent |
| Forgot password | Valid email | Reset email sent within 1 minute |
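A specification table translates almost directly into a table-driven test. The sketch below covers the first three rows against a toy `LoginService`; the class, the sample credentials, and the returned strings are hypothetical stand-ins, and the email side effects (lock notification, password reset) are left out.

```python
MAX_FAILED_ATTEMPTS = 5  # from the "Account locked" row


class LoginService:
    """Toy in-memory login service (hypothetical; stands in for the real system)."""

    def __init__(self, users: dict[str, str]) -> None:
        self._users = users  # email -> password, plain text only for this sketch
        self._failures: dict[str, int] = {}

    def login(self, email: str, password: str) -> str:
        if self._failures.get(email, 0) >= MAX_FAILED_ATTEMPTS:
            return "Account locked"
        if self._users.get(email) == password:
            self._failures[email] = 0
            return "Redirect to dashboard"
        self._failures[email] = self._failures.get(email, 0) + 1
        if self._failures[email] >= MAX_FAILED_ATTEMPTS:
            return "Account locked"
        return 'Error: "Invalid credentials"'


# (scenario, passwords entered in order, expected final outcome)
CASES = [
    ("Valid login", ["s3cret"], "Redirect to dashboard"),
    ("Wrong password", ["wrong"], 'Error: "Invalid credentials"'),
    ("Account locked", ["wrong"] * 5, "Account locked"),
]


def run_case(passwords: list[str]) -> str:
    """Replay a sequence of login attempts and return the last outcome."""
    service = LoginService({"ana@example.com": "s3cret"})  # fresh state per scenario
    outcome = ""
    for password in passwords:
        outcome = service.login("ana@example.com", password)
    return outcome
```

Adding a scenario then means adding one row to `CASES`, which keeps the test readable for the business stakeholders who own the table.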

Characteristics of Good Acceptance Criteria

Testable: You can write a test case for it. "The system should be user-friendly" is not testable. "The checkout process completes in under 3 clicks" is testable.

Specific: No ambiguous language. "Fast" is not specific. "Page loads in under 2 seconds on a 4G connection" is specific.

Complete: Covers the happy path, error cases, and edge cases.

Agreed upon: Written collaboratively by the product owner, developer, and QA engineer (the "Three Amigos" from BDD).

Verifiable before development: You should be able to describe how each criterion will be checked before coding starts. If you cannot, rewrite it.

UAT Examples

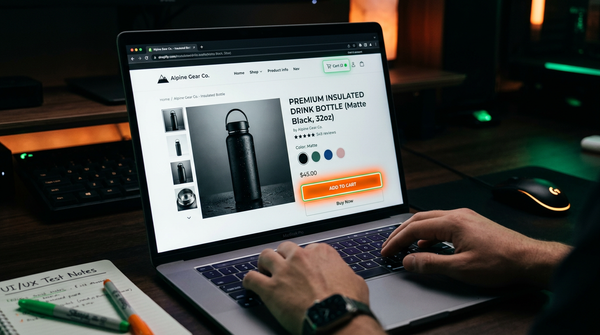

Example 1: E-commerce Checkout

Feature: One-click reorder for returning customers

Acceptance Criteria:

- Returning customers with a saved payment method see a "Reorder" button on past orders

- Clicking "Reorder" adds all previous order items to cart (items still in stock only)

- Out-of-stock items display a notification: "[Item Name] is out of stock and was not added"

- The saved payment method is pre-selected at checkout

- Order confirmation is sent within 2 minutes of placement

UAT Test Scenarios:

- Reorder when all items are in stock

- Reorder when some items are out of stock

- Reorder with an expired saved payment method

- Reorder when cart already has items
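The stock-related criteria and the "cart already has items" scenario can be pinned down with a small sketch. Two behaviours here are assumptions the criteria do not specify: an item counts as in stock only if the full previous quantity is available, and reordered quantities are added to whatever is already in the cart. The function name and data shapes are hypothetical.

```python
def reorder(
    previous_items: dict[str, int],
    stock: dict[str, int],
    cart: dict[str, int],
) -> list[str]:
    """Add in-stock items from a past order to the cart; return out-of-stock notices."""
    notices = []
    for item, qty in previous_items.items():
        if stock.get(item, 0) >= qty:
            # Assumption: merge with any quantity already in the cart
            cart[item] = cart.get(item, 0) + qty
        else:
            notices.append(f"{item} is out of stock and was not added")
    return notices


cart = {"Cable": 1}
notices = reorder(
    previous_items={"Widget Pro": 2, "Gadget": 1},
    stock={"Widget Pro": 5, "Gadget": 0},
    cart=cart,
)
```

Writing the sketch surfaces exactly the kind of open question (merge or replace the cart? partial quantities?) that UAT testers should raise with the product owner.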

Example 2: HR Leave Management System

Feature: Employee submits a leave request

Acceptance Criteria:

- Employee can select leave type (annual, sick, parental, unpaid)

- System validates that leave balance is sufficient before submission

- Request is routed to direct manager for approval

- Manager receives email notification within 5 minutes

- Employee sees pending status in their leave dashboard

- Approved requests automatically update the team calendar

UAT Roles:

- Employee (submitter): Tests submission flow

- Manager: Tests approval flow

- HR admin: Tests reporting and override capabilities

Example 3: Banking Transaction

Feature: International wire transfer

Acceptance Criteria:

- User can initiate a transfer to any SWIFT-supported country

- Transfer requires two-factor authentication

- Fee is displayed before confirmation, not after

- Exchange rate is locked at time of submission for 30 minutes

- Transfer appears in transaction history as "Pending" within 1 minute

- Confirmation SMS sent to registered mobile number

- Transfer fails gracefully if daily limit is exceeded, with a clear error message
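Two of these criteria, the 30-minute rate lock and the daily limit, are easy to express as checks. This is a sketch under stated assumptions: the lock is treated as inclusive at exactly 30 minutes, the limit check compares the running daily total plus the new amount against the limit, and the error wording is invented. Function names are hypothetical.

```python
from datetime import datetime, timedelta
from decimal import Decimal
from typing import Optional

RATE_LOCK = timedelta(minutes=30)  # from the acceptance criteria


def rate_lock_valid(submitted_at: datetime, now: datetime) -> bool:
    """True while the exchange rate quoted at submission is still locked."""
    return timedelta(0) <= now - submitted_at <= RATE_LOCK


def check_daily_limit(
    sent_today: Decimal, amount: Decimal, daily_limit: Decimal
) -> Optional[str]:
    """Return a user-facing error if the transfer would exceed the limit, else None."""
    if sent_today + amount > daily_limit:
        remaining = max(daily_limit - sent_today, Decimal("0"))
        return f"Transfer exceeds your daily limit. Remaining today: {remaining:.2f}"
    return None
```

`Decimal` is used instead of `float` so that monetary comparisons are exact.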

Example 4: SaaS Onboarding Flow

Feature: New user onboarding wizard

Acceptance Criteria:

- New users are shown a 4-step onboarding wizard after first login

- Each step has a clear completion indicator

- Users can skip individual steps (but not the account setup step)

- Completion of all steps triggers a welcome email with next steps

- Onboarding progress is saved if the user closes the browser and returns

- Users who complete onboarding within 24 hours see the "Early Bird" achievement badge

Operational Acceptance Testing (OAT)

While UAT validates business functionality, Operational Acceptance Testing (OAT) validates that the system can be operated, maintained, and supported in production.

OAT is often skipped in fast-moving startups and becomes a source of costly production incidents.

What OAT Tests

Backup and Recovery:

- Data backup runs successfully on schedule

- Backup data can be restored within the agreed RTO (Recovery Time Objective)

- Point-in-time recovery works correctly

Disaster Recovery:

- Failover to secondary region completes within the agreed RTO, with data loss within the agreed RPO (Recovery Point Objective)

- Failback procedure is documented and tested

- Data integrity is maintained through failover

Monitoring and Alerting:

- All critical services have health checks configured

- Alerts fire correctly when thresholds are breached

- On-call runbooks are up to date and accessible

Deployment and Rollback:

- Deployment procedure is documented and automated

- Rollback to the previous version completes within the agreed window

- Zero-downtime deployment works correctly

Security Operations:

- Audit logs are capturing the required events

- Access controls are correctly configured

- Secrets rotation procedure is documented and tested
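Several of the checks above are time-bound, such as restoring a backup within the agreed RTO. A minimal sketch of such a check follows. The `restore` callable is a placeholder for whatever actually triggers the restore in a given environment, and the function name is hypothetical.

```python
import time
from typing import Callable


def restore_within_rto(
    restore: Callable[[], None], rto_seconds: float
) -> tuple[bool, float]:
    """Run a restore procedure and report whether it finished within the RTO."""
    start = time.monotonic()  # monotonic clock is unaffected by system clock changes
    restore()
    elapsed = time.monotonic() - start
    return elapsed <= rto_seconds, elapsed
```

Recording the elapsed time, and not only the pass/fail result, gives the operations team a trend to watch as data volumes grow.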

OAT Entry Criteria

OAT should not start until:

- UAT has been signed off

- The production environment has been provisioned

- Security review has been completed

- Runbooks and operational procedures have been written

Stakeholder Roles in UAT

UAT involves multiple stakeholders with different responsibilities. Confusion about roles is one of the most common UAT failure modes.

Business Sponsor / Product Owner

Responsibility: Owns the UAT. Defines acceptance criteria. Provides sign-off authority.

Common failure mode: Delegating sign-off to someone without authority to approve production deployment.

UAT Testers (End Users / Business Representatives)

Responsibility: Execute UAT test cases. Report defects. Provide business judgment on edge cases.

Who should do this: The actual people who will use the system day-to-day, or experienced business analysts who deeply understand the workflows.

Who should NOT do this: Developers, QA engineers, or anyone who built the system. They have too much context and will unconsciously avoid the failure modes real users will hit.

UAT Coordinator / Test Manager

Responsibility: Manages the UAT process. Maintains the test schedule, defect log, and status reports. Bridges between business users and development team.

Development Team

Responsibility: Fix defects reported during UAT. Provide technical support to UAT testers. Should not be in the room during test execution.

QA Team

Responsibility: Set up the UAT environment. Assist with test case design. Provide tooling for defect tracking. Should have completed their own testing before UAT begins.

Important distinction: QA completing system testing is a prerequisite for UAT, not a substitute for it.

UAT Tools and Environments

Test Case Management Tools

- Jira with Zephyr or Xray plugins — most common for teams already using Jira

- TestRail — dedicated test management with UAT-specific workflows

- qTest — enterprise-focused with UAT tracking and sign-off features

- Spreadsheets — adequate for small projects with few test cases

Defect Tracking

- Any issue tracker works (Jira, Linear, GitHub Issues)

- Key requirement: business users must be able to log defects without technical knowledge

- Include screenshot/video capture support for non-technical testers

UAT Environment

UAT needs a dedicated environment, separate from development and QA environments. In modern cloud deployments, this is typically an environment that mirrors production infrastructure.

For teams using continuous delivery, UAT often runs against a staging environment that auto-deploys from the main branch.

Test Automation After UAT

Once UAT scenarios are validated manually, the highest-value ones should be automated as regression tests. This prevents future releases from breaking business workflows that have already been accepted.

Tools like HelpMeTest can automate these critical user journeys, running them on every deployment and alerting when a previously accepted workflow breaks. The combination of UAT for initial validation and automated tests for ongoing regression gives teams confidence that every release still meets acceptance criteria.

Common UAT Mistakes

1. Starting UAT Too Late

Mistake: Treating UAT as the last step — scheduling it only after all development is done.

Impact: Last-minute defects delay release, create pressure to skip thorough testing, and compress the UAT window.

Fix: Plan UAT scope and write acceptance criteria at project kickoff. Identify UAT testers and get their availability committed early.

2. Poorly Defined Acceptance Criteria

Mistake: Acceptance criteria written as vague statements like "the system should be easy to use" or "performance should be acceptable."

Impact: Endless debate about whether UAT passed. Business users and developers cannot agree on what "done" looks like.

Fix: Use the Given-When-Then format. Make every criterion testable and measurable.

3. Using the Wrong People as UAT Testers

Mistake: Having developers, QA engineers, or project managers do UAT because real users are "too busy."

Impact: Tests miss the workflows and edge cases that real users encounter. UAT signs off on something users cannot actually use.

Fix: Insist on real end users or experienced business analysts. Partial availability is better than proxy testers.

4. Inadequate UAT Environment

Mistake: Running UAT in a development environment with fake data, missing integrations, or different configurations.

Impact: Bugs that only appear in production are missed. Integration failures are discovered post-launch.

Fix: Treat UAT environment setup as a non-negotiable milestone. It must match production.

5. No Defect Triage Process

Mistake: All defects found in UAT are treated as blockers, leading to scope creep and delayed launches.

Impact: Minor cosmetic issues hold up releases. Teams lose confidence in the UAT process.

Fix: Establish a clear defect prioritization scheme (Critical/Major/Minor/Enhancement) and an agreed decision rule about what must be fixed before go-live.

6. No Formal Sign-Off

Mistake: UAT ends with a verbal "it looks good" rather than a formal sign-off document.

Impact: Post-launch disputes about who approved what. No audit trail for compliance.

Fix: Require a written sign-off with named approvers, a summary of what was tested, and a list of any accepted risks.

7. Skipping Regression Testing After Defect Fixes

Mistake: After fixing UAT defects, only retesting the fixed items without checking for regressions.

Impact: Fixes break previously passing scenarios. UAT must be rerun, compressing the timeline further.

Fix: After any defect fix, run a targeted regression pass on related workflows. Automate the critical path scenarios to catch regressions automatically.

FAQ

What is the difference between UAT and system testing?

System testing is done by QA engineers to verify that the integrated system works correctly against technical specifications. UAT is done by business stakeholders to verify that the system meets business requirements. System testing precedes UAT; passing system testing is a prerequisite for starting UAT, not a substitute for it.

Who should perform UAT?

UAT should be performed by the people who will actually use the system — end users, business analysts, or subject matter experts who deeply understand the business workflows. Developers and QA engineers should not perform UAT because they have too much knowledge of the implementation and will unconsciously avoid failure modes real users will encounter.

How long does UAT typically take?

It varies widely. A small feature addition might need 1–2 days. A large enterprise system might need 4–6 weeks of UAT. A common mistake is treating UAT time as elastic — compressing it when development runs late. UAT is not padding in the schedule; it is a critical risk reduction activity.

What happens if UAT fails?

Failed UAT means the software does not meet acceptance criteria. Critical defects must be fixed before go-live. The development team fixes the reported defects, the fixes are retested in the UAT environment, and a new UAT cycle (or focused regression pass) is conducted. The business sponsor decides whether remaining defects are acceptable to ship or must be resolved first.

What is the difference between UAT and beta testing?

UAT is typically an internal process with controlled, identified stakeholders who test against specific test cases before release. Beta testing involves external users testing a near-complete product in real-world conditions without scripted test cases. They serve different purposes: UAT validates against requirements; beta testing discovers unexpected usage patterns and real-world edge cases.

What is Operational Acceptance Testing (OAT)?

OAT validates that the system can be operated and maintained in production. It covers backup/recovery, monitoring, deployment procedures, failover, and security operations. UAT validates "does it work for users?" while OAT validates "can we run this in production reliably?"

Can UAT be automated?

The initial UAT execution is typically manual — it requires business judgment, not just pass/fail verification. However, once UAT scenarios are validated, they should be converted into automated regression tests to verify that future releases do not break previously accepted workflows. This is a high-ROI automation investment because these tests directly correspond to business-critical user journeys.

What documents are produced during UAT?

The core UAT documentation set includes: UAT Plan (scope, schedule, resources), UAT Test Cases, UAT Defect Log, UAT Status Reports, and UAT Sign-Off Document. In regulated industries, this documentation is required evidence for compliance audits.